Restore to Mayastor

This website/page will reach End-of-life (EOL) after 31 August 2024. For the latest Mayastor documentation (v2.6 and above), visit the OpenEBS Documentation.

Mayastor is now also referred to as OpenEBS Replicated PV Mayastor.

Step 1: Install Velero with GCP Provider on Destination (Mayastor Cluster)

Install Velero with the GCP provider. Replace the BUCKET-NAME and SECRET-FILENAME placeholders with the same values that you used originally:

velero install --use-node-agent --provider gcp --plugins velero/velero-plugin-for-gcp:v1.6.0 --bucket BUCKET-NAME --secret-file SECRET-FILENAME --uploader-type restic

CustomResourceDefinition/backuprepositories.velero.io: attempting to create resource

CustomResourceDefinition/backuprepositories.velero.io: attempting to create resource client

CustomResourceDefinition/backuprepositories.velero.io: created

CustomResourceDefinition/backups.velero.io: attempting to create resource

CustomResourceDefinition/backups.velero.io: attempting to create resource client

CustomResourceDefinition/backups.velero.io: created

CustomResourceDefinition/backupstoragelocations.velero.io: attempting to create resource

CustomResourceDefinition/backupstoragelocations.velero.io: attempting to create resource client

CustomResourceDefinition/backupstoragelocations.velero.io: created

CustomResourceDefinition/deletebackuprequests.velero.io: attempting to create resource

CustomResourceDefinition/deletebackuprequests.velero.io: attempting to create resource client

CustomResourceDefinition/deletebackuprequests.velero.io: created

CustomResourceDefinition/downloadrequests.velero.io: attempting to create resource

CustomResourceDefinition/downloadrequests.velero.io: attempting to create resource client

CustomResourceDefinition/downloadrequests.velero.io: created

CustomResourceDefinition/podvolumebackups.velero.io: attempting to create resource

CustomResourceDefinition/podvolumebackups.velero.io: attempting to create resource client

CustomResourceDefinition/podvolumebackups.velero.io: created

CustomResourceDefinition/podvolumerestores.velero.io: attempting to create resource

CustomResourceDefinition/podvolumerestores.velero.io: attempting to create resource client

CustomResourceDefinition/podvolumerestores.velero.io: created

CustomResourceDefinition/restores.velero.io: attempting to create resource

CustomResourceDefinition/restores.velero.io: attempting to create resource client

CustomResourceDefinition/restores.velero.io: created

CustomResourceDefinition/schedules.velero.io: attempting to create resource

CustomResourceDefinition/schedules.velero.io: attempting to create resource client

CustomResourceDefinition/schedules.velero.io: created

CustomResourceDefinition/serverstatusrequests.velero.io: attempting to create resource

CustomResourceDefinition/serverstatusrequests.velero.io: attempting to create resource client

CustomResourceDefinition/serverstatusrequests.velero.io: created

CustomResourceDefinition/volumesnapshotlocations.velero.io: attempting to create resource

CustomResourceDefinition/volumesnapshotlocations.velero.io: attempting to create resource client

CustomResourceDefinition/volumesnapshotlocations.velero.io: created

Waiting for resources to be ready in cluster...

Namespace/velero: attempting to create resource

Namespace/velero: attempting to create resource client

Namespace/velero: created

ClusterRoleBinding/velero: attempting to create resource

ClusterRoleBinding/velero: attempting to create resource client

ClusterRoleBinding/velero: created

ServiceAccount/velero: attempting to create resource

ServiceAccount/velero: attempting to create resource client

ServiceAccount/velero: created

Secret/cloud-credentials: attempting to create resource

Secret/cloud-credentials: attempting to create resource client

Secret/cloud-credentials: created

BackupStorageLocation/default: attempting to create resource

BackupStorageLocation/default: attempting to create resource client

BackupStorageLocation/default: created

VolumeSnapshotLocation/default: attempting to create resource

VolumeSnapshotLocation/default: attempting to create resource client

VolumeSnapshotLocation/default: created

Deployment/velero: attempting to create resource

Deployment/velero: attempting to create resource client

Deployment/velero: created

DaemonSet/node-agent: attempting to create resource

DaemonSet/node-agent: attempting to create resource client

DaemonSet/node-agent: created

Velero is installed! ⛵ Use 'kubectl logs deployment/velero -n velero' to view the status.

Step 2: Verify Backup Availability

Check the availability of your previously-saved backups. If the credentials or bucket information doesn't match, you won't be able to see the backups:

velero get backup | grep 13-09-23

mongo-backup-13-09-23 Completed 0 0 2023-09-13 13:15:32 +0000 UTC 29d default <none>

kubectl get backupstoragelocation -n velero

NAME PHASE LAST VALIDATED AGE DEFAULT

default Available 23s 3m32s true

Step 3: Restore Using Velero CLI

Initiate the restore process using Velero CLI with the following command:
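A minimal sketch of this step, assuming the backup listed in Step 2 (`mongo-backup-13-09-23`) is the one to restore. The helper name `restore_from_backup` and the restore name `mongo-restore` are hypothetical and chosen only for illustration:

```shell
# Sketch only: the restore name is a hypothetical value chosen by the caller.
# Usage: restore_from_backup <restore-name> <backup-name>
restore_from_backup() {
  restore_name="$1"
  backup_name="$2"
  # Create a restore object from an existing backup
  velero restore create "$restore_name" --from-backup "$backup_name"
}

# Example call (not executed here):
#   restore_from_backup mongo-restore mongo-backup-13-09-23
```

Naming the restore explicitly makes it easier to look it up again in the later status checks.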

Step 4: Check Restore Status

You can check the status of the restore process by using the velero get restore command.

When Velero performs a restore, it deploys an init container within the application pod, responsible for restoring the volume. Initially, the restore status will be InProgress.
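The status check can be sketched as follows. The helper name `show_restore_status` is hypothetical, and the restore name passed to it is whatever name you gave the restore when you created it:

```shell
# Sketch only: wraps two read-only Velero CLI calls.
# Usage: show_restore_status <restore-name>
show_restore_status() {
  # One-line summary of every restore known to Velero
  velero get restore
  # Detailed view of a single restore, including per-volume information
  velero restore describe "$1" --details
}

# Example call (not executed here):
#   show_restore_status mongo-restore
```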

Step 5: Backup PVC and Change Storage Class

Retrieve the current configuration of the PVC that is in Pending status using the following command:

Confirm that the PVC configuration has been saved by checking its existence with this command:
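A sketch of the save-and-confirm sequence, assuming the PVC name and namespace are supplied by you (neither is stated on this page). The helper name `save_pvc_manifest` is hypothetical:

```shell
# Sketch only: PVC name and namespace are assumptions supplied by the caller.
# Usage: save_pvc_manifest <pvc-name> <namespace> [output-file]
save_pvc_manifest() {
  out_file="${3:-pvc-mongo.yaml}"
  # Export the live PVC definition to a local file
  kubectl get pvc "$1" -n "$2" -o yaml > "$out_file" || return 1
  # Confirm the file exists and is not empty, then list it
  [ -s "$out_file" ] && ls -l "$out_file"
}

# Example call (not executed here; <pvc-name> and <namespace> are placeholders):
#   save_pvc_manifest <pvc-name> <namespace>
```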

Edit the pvc-mongo.yaml file to update its storage class. Below is the modified PVC configuration with mayastor-single-replica set as the new storage class:
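If you prefer not to edit the file by hand, the storage class change can be scripted. This is a sketch under the assumption that the manifest contains an ordinary `storageClassName:` line; the helper name `set_storage_class` is hypothetical:

```shell
# Sketch only: rewrites the storageClassName field of a saved manifest.
# Usage: set_storage_class <manifest-file> <new-storage-class>
set_storage_class() {
  # Replace the value on every storageClassName line, keeping its indentation.
  # A copy of the original file is kept as <manifest-file>.bak.
  sed -i.bak -E "s/^([[:space:]]*storageClassName:).*/\1 $2/" "$1"
}

# Example call (not executed here):
#   set_storage_class pvc-mongo.yaml mayastor-single-replica
```

Depending on how the manifest was exported, server-populated fields such as `metadata.resourceVersion` and `metadata.uid` may also need to be removed before the file can be applied again.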

Step 6: Resolve Issue Where PVC Is in a Pending State

Begin by deleting the problematic PVC with the following command:

Once the PVC has been successfully deleted, you can recreate it using the updated configuration from the pvc-mongo.yaml file. Apply the new configuration with the following command:
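The delete-then-recreate sequence can be sketched as one helper. The name `recreate_pvc` is hypothetical, and the PVC name and namespace are assumptions that you supply:

```shell
# Sketch only: PVC name and namespace are assumptions supplied by the caller.
# Usage: recreate_pvc <pvc-name> <namespace> [manifest-file]
recreate_pvc() {
  manifest="${3:-pvc-mongo.yaml}"
  # Remove the Pending claim; stop here if the delete fails
  kubectl delete pvc "$1" -n "$2" || return 1
  # Recreate the claim from the edited manifest
  kubectl apply -f "$manifest" -n "$2"
}

# Example call (not executed here; <pvc-name> and <namespace> are placeholders):
#   recreate_pvc <pvc-name> <namespace>
```

Stopping on a failed delete avoids applying the new manifest on top of a claim that still exists with the old storage class.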

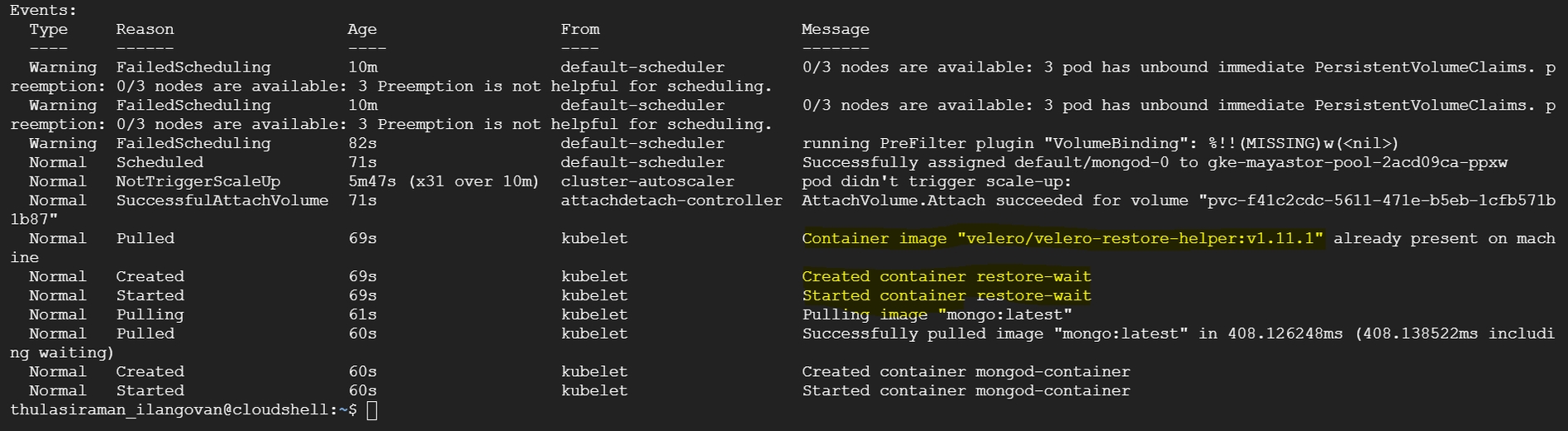

Step 7: Check Velero init container

After recreating the PVC with Mayastor storageClass, you will observe the presence of a Velero initialization container within the application pod. This container is responsible for restoring the required volumes.

You can check the status of the restore operation by running the following command:

The output will display the pods' status, including the Velero initialization container. Initially, the status might show as "Init:0/1," indicating that the restore process is in progress.

You can track the progress of the restore by running:
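Both checks can be sketched together, assuming Velero runs in the `velero` namespace created during installation and that you supply the application namespace. The helper name `watch_restore_progress` is hypothetical:

```shell
# Sketch only: the application namespace is an assumption supplied by the caller.
# Usage: watch_restore_progress <app-namespace>
watch_restore_progress() {
  # Pod status, including init containers (for example Init:0/1)
  kubectl get pods -n "$1"
  # Per-volume restore objects created by Velero's node agent
  kubectl get podvolumerestores -n velero
}

# Example call (not executed here; <app-namespace> is a placeholder):
#   watch_restore_progress <app-namespace>
```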

You can then verify the data restoration by accessing your MongoDB instance. In the provided example, we used the "mongosh" shell to connect to the MongoDB instance and check the databases and their content. The data should reflect what was previously backed up from the cStor storage.

Step 8: Monitor Pod Progress

Because the StatefulSet is configured with three replicas, the mongo-1 pod is created but remains in Pending status. This is expected, because the StatefulSet configuration still sets the storage class to cStor.

Step 9: Capture the StatefulSet Configuration and Modify Storage Class

Capture the current configuration of the StatefulSet for MongoDB by running the following command:

This command will save the existing StatefulSet configuration to a file named sts-mongo-original.yaml. Next, edit this YAML file to change the storage class to mayastor-single-replica.
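The capture step could look like the sketch below. The StatefulSet name and namespace are assumptions that you supply, and the helper name `save_sts_manifest` is hypothetical:

```shell
# Sketch only: StatefulSet name and namespace are assumptions supplied by the caller.
# Usage: save_sts_manifest <statefulset-name> <namespace>
save_sts_manifest() {
  # Export the live StatefulSet definition to a local file
  kubectl get statefulset "$1" -n "$2" -o yaml > sts-mongo-original.yaml || return 1
  # Print the line(s) where the storage class is set, with line numbers,
  # so the value to change is easy to locate in an editor
  grep -n "storageClassName" sts-mongo-original.yaml
}

# Example call (not executed here; both arguments are placeholders):
#   save_sts_manifest <statefulset-name> <namespace>
```

In a StatefulSet the storage class normally sits under `volumeClaimTemplates`, so that is where the reported line number should point.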

Step 10: Delete StatefulSet (Cascade=False)

Delete the StatefulSet while preserving the pods with the following command:

You can run the following commands to verify the status:
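A sketch of the delete and the follow-up checks, assuming a recent kubectl where the orphaning option is spelled `--cascade=orphan` (older releases accept `--cascade=false`). The helper name `orphan_delete_sts` is hypothetical, and the StatefulSet name and namespace are supplied by you:

```shell
# Sketch only: StatefulSet name and namespace are assumptions supplied by the caller.
# Usage: orphan_delete_sts <statefulset-name> <namespace>
orphan_delete_sts() {
  # Remove the StatefulSet object but leave its pods running
  kubectl delete statefulset "$1" -n "$2" --cascade=orphan || return 1
  # The StatefulSet should no longer be listed
  kubectl get statefulset -n "$2"
  # The pods it managed should still be present
  kubectl get pods -n "$2"
}

# Example call (not executed here; both arguments are placeholders):
#   orphan_delete_sts <statefulset-name> <namespace>
```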

Step 11: Deleting Pending Secondary Pods and PVCs

Delete the MongoDB Pod mongod-1.

Delete the Persistent Volume Claim (PVC) for mongod-1.
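These two deletions can be sketched as follows. The pod name comes from this step; the PVC name and namespace are not given on this page, so they are arguments here. The helper name `delete_pending_replica` is hypothetical:

```shell
# Sketch only: PVC name and namespace are assumptions supplied by the caller.
# Usage: delete_pending_replica <pod-name> <pvc-name> <namespace>
delete_pending_replica() {
  # Delete the pod first so nothing is still using the claim
  kubectl delete pod "$1" -n "$3" || return 1
  # Then delete the claim that was created with the old storage class
  kubectl delete pvc "$2" -n "$3"
}

# Example call (not executed here; <pvc-name> and <namespace> are placeholders):
#   delete_pending_replica mongod-1 <pvc-name> <namespace>
```

Because the StatefulSet was deleted in the previous step, the pod is not recreated until the StatefulSet itself is applied again.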

Step 12: Recreate StatefulSet

Recreate the StatefulSet from the modified YAML file.
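A sketch of the recreate step, assuming the edited manifest is still named sts-mongo-original.yaml and that you supply the namespace. The helper name `recreate_sts` is hypothetical:

```shell
# Sketch only: the namespace is an assumption supplied by the caller.
# Usage: recreate_sts <namespace> [manifest-file]
recreate_sts() {
  manifest="${2:-sts-mongo-original.yaml}"
  # Create the StatefulSet again, now pointing at the new storage class
  kubectl apply -f "$manifest" -n "$1" || return 1
  # List the pods so the recreated replicas can be observed
  kubectl get pods -n "$1"
}

# Example call (not executed here; <namespace> is a placeholder):
#   recreate_sts <namespace>
```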

Step 13: Verify Data Replication on Secondary DB

Verify data replication on the secondary database to ensure synchronization.
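One way to check this is to ask the replica set for the state of each member. The sketch below assumes `mongosh` is available inside the pod and that no extra authentication options are required, which may not hold in your deployment. The helper name `show_replica_states` is hypothetical:

```shell
# Sketch only: assumes mongosh exists in the container and needs no credentials.
# Usage: show_replica_states <pod-name> <namespace>
show_replica_states() {
  # Print each replica set member with its state (for example PRIMARY or SECONDARY)
  kubectl exec "$1" -n "$2" -- mongosh --quiet --eval \
    'rs.status().members.forEach(m => print(m.name + " " + m.stateStr))'
}

# Example call (not executed here; <namespace> is a placeholder):
#   show_replica_states mongod-1 <namespace>
```

A member that reports SECONDARY has joined the replica set; comparing database contents against the primary then confirms that the data itself has synchronized.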
