This page will reach end-of-life (EOL) after 31 August 2024. For the latest Mayastor documentation (v2.6 and above), visit the OpenEBS Documentation.

Mayastor is now also referred to as OpenEBS Replicated PV Mayastor.

When a node allocates storage capacity for a replica of a persistent volume (PV) it does so from a DiskPool. Each node may create and manage zero, one, or more such pools. The ownership of a pool by a node is exclusive. A pool can manage only one block device, which constitutes the entire data persistence layer for that pool and thus defines its maximum storage capacity.

A pool is defined declaratively, through the creation of a corresponding DiskPool custom resource on the cluster. The DiskPool must be created in the same namespace where Mayastor has been deployed. User-configurable parameters of this resource type include a unique name for the pool, the name of the node on which it will be hosted, and a reference to a disk device which is accessible from that node. The pool definition requires the reference to its member block device to adhere to a discrete range of schemas, each associated with a specific access mechanism, transport, or device type.

The device reference is given in the spec.disks field of the DiskPool custom resource (CR).

Once a node has created a pool, it is assumed that it henceforth has exclusive use of the associated block device; the device should not be partitioned, formatted, or shared with another application or process. Any pre-existing data on the device will be destroyed.

A RAM drive isn't suitable for use in production as it uses volatile memory for backing the data. The memory for this disk emulation is allocated from the hugepages pool. Make sure to allocate sufficient additional hugepages resource on any storage nodes which will provide this type of storage.

To get started, it is necessary to create and host at least one pool on one of the nodes in the cluster. The number of pools available limits the extent to which the synchronous N-way mirroring (replication) of PVs can be configured; the number of pools configured should be equal to or greater than the desired maximum replication factor of the PVs to be created. Also, while placing data replicas ensure that appropriate redundancy is provided. Mayastor's control plane will avoid placing more than one replica of a volume on the same node. For example, the minimum viable configuration for a Mayastor deployment which is intended to implement 3-way mirrored PVs must have three nodes, each having one DiskPool, with each of those pools having one unique block device allocated to it.

Using one or more of the following examples as templates, create the required type and number of pools.

The status of DiskPools may be determined by reference to their cluster CRs. Available, healthy pools should report their POOL_STATUS as Online. Verify that the expected number of pools have been created and that they are online.
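The verification step above could be scripted along the following lines. This is a minimal sketch that assumes the column layout of this guide's sample `kubectl get dsp` output (POOL_STATUS as the fourth column); the function name `count_online_pools` is hypothetical and not part of any Mayastor tooling.

```shell
# Hypothetical helper: counts DiskPools whose POOL_STATUS column reads "Online".
# Assumes the listing format: NAME NODE STATE POOL_STATUS CAPACITY USED AVAILABLE
count_online_pools() {
  # Skip the header row, then count rows whose fourth field is "Online".
  awk 'NR > 1 && $4 == "Online" { n++ } END { print n + 0 }'
}
```

It could be used as `kubectl get dsp -n mayastor | count_online_pools`, comparing the printed number with the number of pools you expect to exist.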

Mayastor dynamically provisions PersistentVolumes (PVs) based on StorageClass definitions created by the user. Parameters of the definition are used to set the characteristics and behaviour of its associated PVs. A detailed description of these parameters is given elsewhere in this documentation. Most importantly, the StorageClass definition controls the level of data protection afforded to its PVs (that is, the number of synchronous data replicas which are maintained for purposes of redundancy). It is possible to create any number of StorageClass definitions, spanning all permitted parameter permutations.

We illustrate this quickstart guide with two examples of possible use cases; one which offers no data redundancy (i.e. a single data replica), and another having three data replicas.
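Because the two use cases differ only in name and replica count, a manifest could be generated from those two values. The sketch below is illustrative only: `render_storageclass` is a hypothetical helper, and the remaining field values (`ioTimeout`, `protocol`, `provisioner`) are copied from this guide's StorageClass examples rather than derived from any other source.

```shell
# Hypothetical helper: prints a StorageClass manifest for a given name and
# replica count. Field values mirror the StorageClass examples in this guide.
render_storageclass() {
  name=$1
  repl=$2
  cat <<EOF
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: ${name}
parameters:
  ioTimeout: "30"
  protocol: nvmf
  repl: "${repl}"
provisioner: io.openebs.csi-mayastor
EOF
}
```

For example, `render_storageclass mayastor-3 3 | kubectl create -f -` would submit a three-replica class; printing the manifest first makes it easy to review before applying it.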

| Type | Format | Example |
| --- | --- | --- |
| Disk (non PCI) with disk-by-guid reference (Best Practice) | Device File | aio:///dev/disk/by-id/ OR uring:///dev/disk/by-id/ |
| Asynchronous Disk (AIO) | Device File | /dev/sdx |
| Asynchronous Disk I/O (AIO) | Device File | aio:///dev/sdx |
| io_uring | Device File | uring:///dev/sdx |
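A device reference can be checked against the forms listed above before it is placed in a DiskPool definition. The following is a minimal sketch under the assumption that only those listed forms (a plain `/dev/...` path, `aio:///dev/...`, and `uring:///dev/...`) are of interest; Mayastor may accept other schemes that this hypothetical `is_listed_disk_ref` function would reject.

```shell
# Hypothetical validator: succeeds only for the device reference forms listed
# in this guide (plain device file, aio:// and uring:// URIs under /dev).
is_listed_disk_ref() {
  case "$1" in
    /dev/?* | aio:///dev/?* | uring:///dev/?*) return 0 ;;
    *) return 1 ;;
  esac
}
```

A call such as `is_listed_disk_ref "aio:///dev/sdx" && echo ok` prints `ok`, while an unrelated URI makes the function return a non-zero status.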

    DEVNAME       DEVTYPE  SIZE      AVAILABLE  MODEL                             DEVPATH                                                          MAJOR  MINOR  DEVLINKS
    /dev/nvme0n1  disk     894.3GiB  yes        Dell DC NVMe PE8010 RI U.2 960GB  /devices/pci0000:30/0000:30:02.0/0000:31:00.0/nvme/nvme0/nvme0n1  259    0      "/dev/disk/by-id/nvme-eui.ace42e00164f0290", "/dev/disk/by-path/pci-0000:31:00.0-nvme-1", "/dev/disk/by-dname/nvme0n1", "/dev/disk/by-id/nvme-Dell_DC_NVMe_PE8010_RI_U.2_960GB_SDA9N7266I110A814"

    cat <<EOF | kubectl create -f -
    apiVersion: "openebs.io/v1beta1"
    kind: DiskPool
    metadata:
      name: pool-on-node-1
      namespace: mayastor
    spec:
      node: workernode-1-hostname
      disks: ["/dev/disk/by-id/<id>"]
    EOF

    apiVersion: "openebs.io/v1beta1"
    kind: DiskPool
    metadata:
      name: INSERT_POOL_NAME_HERE
      namespace: mayastor
    spec:
      node: INSERT_WORKERNODE_HOSTNAME_HERE
      disks: ["INSERT_DEVICE_URI_HERE"]

    kubectl get dsp -n mayastor

    NAME             NODE           STATE     POOL_STATUS   CAPACITY      USED   AVAILABLE
    pool-on-node-1   node-1-14944   Created   Online        10724835328   0      10724835328
    pool-on-node-2   node-2-14944   Created   Online        10724835328   0      10724835328
    pool-on-node-3   node-3-14944   Created   Online        10724835328   0      10724835328

    cat <<EOF | kubectl create -f -
    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: mayastor-1
    parameters:
      ioTimeout: "30"
      protocol: nvmf
      repl: "1"
    provisioner: io.openebs.csi-mayastor
    EOF

    cat <<EOF | kubectl create -f -
    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: mayastor-3
    parameters:
      ioTimeout: "30"
      protocol: nvmf
      repl: "3"
    provisioner: io.openebs.csi-mayastor
    EOF

The supportability tool collects Mayastor-specific information from the cluster using the command-line tool. It uses the dump command, which interacts with the Mayastor services to build an archive (ZIP) file that holds the bundled information.

To bundle Mayastor's complete system information, execute:

    kubectl mayastor dump system -n mayastor -d <output_directory_path>

To view all the available options and sub-commands that can be used with the dump command, execute:

    kubectl mayastor dump --help

    `Dump` resources

    Usage: kubectl-mayastor dump [OPTIONS] <COMMAND>

    Commands:
      system  Collects entire system information
      etcd    Collects information from etcd
      help    Print this message or the help for the given subcommand(s)

    Options:
      -r, --rest <REST>
              The rest endpoint to connect to
      -t, --timeout <TIMEOUT>
              Specifies the timeout value to interact with other modules of system [default: 10s]
      -k, --kube-config-path <KUBE_CONFIG_PATH>
              Path to kubeconfig file
      -s, --since <SINCE>
              Period states to collect all logs from last specified duration [default: 24h]
      -l, --loki-endpoint <LOKI_ENDPOINT>
              LOKI endpoint, if left empty then it will try to parse endpoint from Loki service(K8s service resource), if the tool is unable to parse from service then logs will be collected using Kube-apiserver
      -e, --etcd-endpoint <ETCD_ENDPOINT>
              Endpoint of ETCD service, if left empty then will be parsed from the internal service name
      -d, --output-directory-path <OUTPUT_DIRECTORY_PATH>
              Output directory path to store archive file [default: ./]
      -n, --namespace <NAMESPACE>
              Kubernetes namespace of mayastor service [default: mayastor]
      -o, --output <OUTPUT>
              The Output, viz yaml, json [default: none]
      -j, --jaeger <JAEGER>
              Trace rest requests to the Jaeger endpoint agent
      -h, --help
              Print help

    Supportability - collects state & log information of services and dumps it to a tar file.

The archive files generated by the dump command are stored in the specified output directories. The tables below specify the path and the content that will be stored in each archive file.

| Archive file path | Resource type | Resource name | File | Content |
| --- | --- | --- | --- | --- |
| ./topology/node | node | node-01 | node-01-topology.json | Topology of node-01 (all node topologies will be available here) |
| ./topology/pool | pool | pool-01 | pool-01-topology.json | Topology of pool-01 (all pool topologies will be available here) |
| ./topology/volume | volume | volume-01 | volume-01-topology.json | Topology information of volume-01 (all volume topologies will be available here) |

| Archive file path | Node | Container | File |
| --- | --- | --- | --- |
| ./logs/core-agents | - | agent-core | loki-agent-core.log |
| ./logs/rest | - | api-rest | loki-api-rest.log |
| ./logs/csi-controller | - | csi-attacher | loki-csi-attacher.log |
| ./logs/csi-controller | - | csi-controller | loki-csi-controller.log |
| ./logs/csi-controller | - | csi-provisioner | loki-csi-provisioner.log |
| ./logs/diskpool-operator | - | operator-diskpool | loki-operator-disk-pool.log |
| ./logs/mayastor | node-02 | csi-driver-registrar | node-02-loki-csi-driver-registrar.log |
| ./logs/mayastor | node-01 | csi-node | node-01-loki-csi-node.log |
| ./logs/mayastor | node-01 | io-engine | node-01-loki-mayastor.log |
| ./logs/mayastor | node-02 | io-engine | node-02-loki-mayastor.log |
| ./logs/etcd | node-03 | etcd | node-03-loki-etcd.log |

| Archive file path | Resource | File |
| --- | --- | --- |
| ./k8s_resources/configurations/ | agent-core (Deployment) | mayastor-agent-core.yaml |
| ./k8s_resources/configurations/ | api-rest | mayastor-api-rest.yaml |
| ./k8s_resources/configurations/ | csi-controller (Deployment) | mayastor-csi-controller.yaml |
| ./k8s_resources/configurations/ | csi-node (DaemonSet) | mayastor-csi-node.yaml |
| ./k8s_resources/configurations/ | etcd (StatefulSet) | mayastor-etcd.yaml |
| ./k8s_resources/configurations/ | loki (StatefulSet) | mayastor-loki.yaml |
| ./k8s_resources/configurations/ | operator-diskpool | mayastor-operator-disk-pool.yaml |
| ./k8s_resources/configurations/ | promtail (DaemonSet) | mayastor-promtail.yaml |
| ./k8s_resources/configurations/ | io-engine (DaemonSet) | io-engine.yaml |
| ./k8s_resources/configurations/ | disk_pools | k8s_diskPools.yaml |
| ./k8s_resources | events | k8s_events.yaml |
| ./k8s_resources/configurations/ | all pods (deployed under the same namespace as Mayastor) | pods.yaml |
| ./k8s_resources | volume snapshot classes | volume_snapshot_classes.yaml |
| ./k8s_resources | volume snapshot contents | volume_snapshot_contents.yaml |
| ./ | etcd | etcd_dump |
| ./ | Support-tool | support_tool_logs.log |

The supportability tool generates support bundles, which are used for debugging purposes. These bundles are created in response to the user's invocation of the tool and can be transmitted only by the user. Below is the information collected by the supportability tool that might be identified as 'sensitive' based on the organization's data protection/privacy commitments and security policies.

Logs: The default installation of Mayastor includes the deployment of a log aggregation subsystem based on Grafana Loki. All the pods deployed in the same namespace as Mayastor and labelled with openebs.io/logging=true will have their logs incorporated within this centralized collector. These logs may include the following information:

- Kubernetes (K8s) node hostnames
- IP addresses
- container addresses
- API endpoints
  - Mayastor
  - K8s
- Container names
- K8s Persistent Volume names (provisioned by Mayastor)
- DiskPool names
- Block device details (except the content)

K8s Definition Files: The support bundle includes definition files for all the Mayastor components. Some of these are listed below:

- Deployments
- DaemonSets
- StatefulSets
- VolumeSnapshotClass
- VolumeSnapshotContent

K8s Events: The archive files generated by the supportability tool contain information on all the events of the Kubernetes cluster present in the same namespace as Mayastor.

etcd Dump: The default installation of Mayastor deploys an etcd instance for its exclusive use. This key-value store is used to persist state information for Mayastor-managed objects. These key-value pairs are required for diagnostic and troubleshooting purposes. The etcd dump archive file consists of the following information:

- Kubernetes node hostnames
- IP addresses
- PVC/PV names
- Container names
  - Mayastor
  - User applications within the mayastor namespace
- Block device details (except data content)
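When support bundles are collected regularly, it can help to assemble the dump invocation from a few parameters. The sketch below only prints the command; it uses the `-n`, `-d` and `-s` options and their defaults as listed in the help output, and assumes that `-s` is accepted in the same position as `-n` and `-d`. The function name `dump_system_cmd` is hypothetical.

```shell
# Hypothetical wrapper: prints a "kubectl mayastor dump system" invocation.
# Defaults are taken from the dump help output: namespace "mayastor",
# output directory "./", log period "24h".
dump_system_cmd() {
  namespace=${1:-mayastor}
  outdir=${2:-./}
  since=${3:-24h}
  printf 'kubectl mayastor dump system -n %s -d %s -s %s\n' \
    "$namespace" "$outdir" "$since"
}
```

Calling `dump_system_cmd mayastor /tmp/bundle 2h` prints the command line so that it can be reviewed before being run.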

By default, Mayastor collects basic information related to the number and scale of user-deployed instances. The collected data is anonymous and is encrypted at rest. This data is used to understand storage usage trends, which in turn helps maintainers prioritize their contributions to maximize the benefit to the community as a whole.

A summary of the information collected is given below:

Cluster information

- K8s cluster ID: This is a SHA-256 hashed value of the UID of your Kubernetes cluster's kube-system namespace.
- K8s node count: This is the number of nodes in your Kubernetes cluster.
- Product name: This field displays the name Mayastor.
- Product version: This is the deployed version of Mayastor.
- Deploy namespace: This is a SHA-256 hashed value of the name of the Kubernetes namespace where the Mayastor Helm chart is deployed.
- Storage node count: This is the number of nodes on which the Mayastor I/O engine is scheduled.

Pool information

- Pool count: This is the number of Mayastor DiskPools in your cluster.
- Pool maximum size: This is the capacity of the Mayastor DiskPool with the highest capacity.
- Pool minimum size: This is the capacity of the Mayastor DiskPool with the lowest capacity.
- Pool mean size: This is the average capacity of the Mayastor DiskPools in your cluster.
- Pool capacity percentiles: This calculates and returns the capacity distribution of Mayastor DiskPools for the 50th, 75th and the 90th percentiles.
- Pools created: This is the number of successful pool creation attempts.
- Pools deleted: This is the number of successful pool deletion attempts.

Volume information

- Volume count: This is the number of Mayastor Volumes in your cluster.
- Volume minimum size: This is the capacity of the Mayastor Volume with the lowest capacity.
- Volume mean size: This is the average capacity of the Mayastor Volumes in your cluster.
- Volume capacity percentiles: This calculates and returns the capacity distribution of Mayastor Volumes for the 50th, 75th and the 90th percentiles.
- Volumes created: This is the number of successful volume creation attempts.
- Volumes deleted: This is the number of successful volume deletion attempts.

Replica information

- Replica count: This is the number of Mayastor Volume replicas in your cluster.
- Average replica count per volume: This is the average number of replicas each Mayastor Volume has in your cluster.

The collected information is stored on behalf of the OpenEBS project by DataCore Software Inc. in data centers located in Texas, USA.

To disable the collection of usage data or the generation of events, pass the corresponding flag to the Helm command, either during installation or by re-running it after installation.

To disable the collection of data metrics from the cluster, add the following flag to the Helm install command.

    --set obs.callhome.enabled=false

When eventing is enabled, NATS pods are created to gather various events from the cluster, including statistical metrics such as pools created. To deactivate eventing within the cluster, include the following flag in the Helm installation command.

    --set eventing.enabled=false
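To give a feel for what a SHA-256 hashed identifier such as the K8s cluster ID looks like, the following sketch hashes an arbitrary string. It is an illustration only: `hash_id` is a hypothetical helper, and the exact input encoding Mayastor hashes (for example, whether any surrounding characters are included) is an assumption, so the result is not guaranteed to match the value Mayastor reports.

```shell
# Hypothetical illustration: prints the hex SHA-256 digest of the given string.
# Uses printf (no trailing newline) and the coreutils sha256sum utility.
hash_id() {
  printf '%s' "$1" | sha256sum | cut -d ' ' -f 1
}
```

For example, `hash_id "$(kubectl get namespace kube-system -o jsonpath='{.metadata.uid}')"` hashes the UID of the kube-system namespace; the digest does not expose the UID itself.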

| Platform | Details |
| --- | --- |
| Kubernetes | v1.25.10, v1.23.7, v1.22.10, v1.21.13 |
| Linux | Distribution: Ubuntu; Version: 20.04 LTS; Kernel version: 5.13.0-27-generic |
| Windows | Not supported |

The objective of this section is to provide the user and evaluator of Mayastor with a topological view of the gross anatomy of a Mayastor deployment. A description will be made of the expected pod inventory of a correctly deployed cluster, the roles and functions of the constituent pods and related Kubernetes resource types, and of the high level interactions between them and the orchestration thereof.

More detailed guides to Mayastor's components, their design and internal structure, and instructions for building Mayastor from source, are maintained within the project's GitHub repository.

The io-engine pod encapsulates Mayastor containers, which implement the I/O path from the block devices at the persistence layer, up to the relevant initiators on the worker nodes mounting volume claims. The Mayastor process running inside this container performs four major functions:

Creates and manages DiskPools hosted on that node.

Creates, exports, and manages volume controller objects hosted on that node.

Creates and exposes replicas from DiskPools hosted on that node over NVMe-TCP.

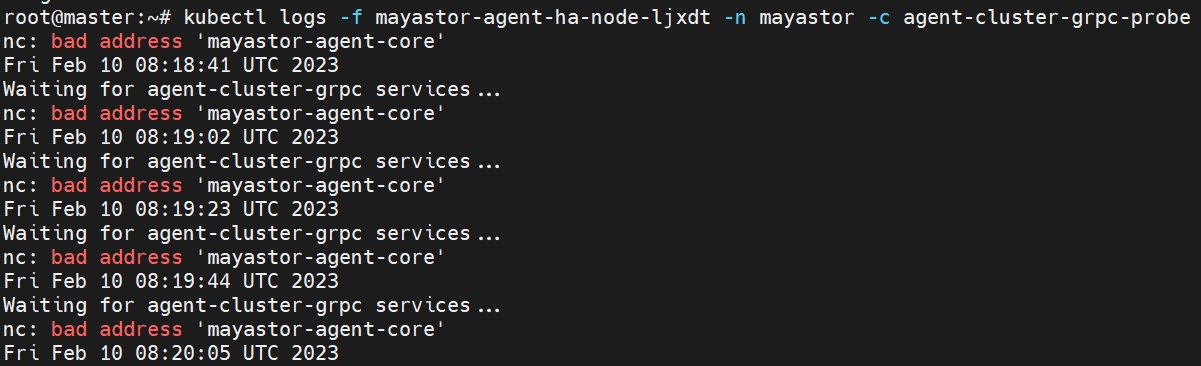

Provides a gRPC interface service to orchestrate the creation, deletion and management of the above objects, hosted on that node. Before the io-engine pod starts running, an init container attempts to verify connectivity to the agent-core in the namespace where Mayastor has been deployed. If a connection is established, the io-engine pod registers itself over gRPC to the agent-core. In this way, the agent-core maintains a registry of nodes and their supported api-versions. The scheduling of these pods is determined declaratively by using a DaemonSet specification. By default, a nodeSelector field is used within the pod spec to select all worker nodes to which the user has attached the label

The csi-node pods within a cluster implement the node plugin component of Mayastor's CSI driver. As such, their function is to orchestrate the mounting of Mayastor-provisioned volumes on worker nodes on which application pods consuming those volumes are scheduled. By default, a csi-node pod is scheduled on every node in the target cluster, as determined by a DaemonSet resource of the same name. Each of these pods encapsulates two containers, csi-node, and csi-driver-registrar. The node plugin does not need to run on every worker node within a cluster and this behavior can be modified, if desired, through the application of appropriate node labeling and the addition of a corresponding nodeSelector entry within the pod spec of the csi-node DaemonSet. However, it should be noted that if a node does not host a plugin pod, then it will not be possible to schedule an application pod on it, which is configured to mount Mayastor volumes.

etcd is a distributed reliable key-value store for the critical data of a distributed system. Mayastor uses etcd as a reliable persistent store for its configuration and state data.

The supportability tool is used to create support bundles (archive files) by interacting with multiple services present in the cluster where Mayastor is installed. These bundles contain information about Mayastor resources like volumes, pools and nodes, and can be used for debugging. The tool can collect the following information:

Topological information of Mayastor's resource(s) by interacting with the REST service

Historical logs by interacting with Loki. If Loki is unavailable, it interacts with the kube-apiserver to fetch logs.

Mayastor-specific Kubernetes resources by interacting with the kube-apiserver

Mayastor-specific information from etcd (internal) by interacting with the etcd server.

aggregates and centrally stores logs from all Mayastor containers which are deployed in the cluster.

is a log collector built specifically for Loki. It uses the configuration file for target discovery and includes analogous features for labeling, transforming, and filtering logs from containers before ingesting them to Loki.

api-rest

Pod

Hosts the public API REST server

Single

api-rest

Service

Exposes the REST API server

operator-diskpool

Deployment

Hosts DiskPool operator

Single

csi-node

DaemonSet

Hosts CSI Driver node plugin containers

All worker nodes

etcd

StatefulSet

Hosts etcd Server container

Configurable(Recommended: Three replicas)

etcd

Service

Exposes etcd DB endpoint

Single

etcd-headless

Service

Exposes etcd DB endpoint

Single

io-engine

DaemonSet

Hosts Mayastor I/O engine

User-selected nodes

DiskPool

CRD

Declares a Mayastor pool's desired state and reflects its current state

User-defined, one or many

Additional components

metrics-exporter-pool

Sidecar container (within io-engine DaemonSet)

Exports pool related metrics in Prometheus format

All worker nodes

pool-metrics-exporter

Service

Exposes exporter API endpoint to Prometheus

Single

promtail

DaemonSet

Scrapes logs of Mayastor-specific pods and exports them to Loki

All worker nodes

loki

StatefulSet

Stores the historical logs exported by promtail pods

Single

loki

Service

Exposes the Loki API endpoint via ClusterIP

Single

openebs.io/engine=mayastorControl Plane

agent-core

Deployment

Principle control plane actor

Single

csi-controller

Deployment

Hosts Mayastor's CSI controller implementation and CSI provisioner side car

Single


This quickstart guide describes the actions necessary to perform a basic installation of Mayastor on an existing Kubernetes cluster, sufficient for evaluation purposes. It assumes that the target cluster will pull the Mayastor container images directly from OpenEBS public container repositories. Where preferred, it is also possible to build Mayastor locally from source and deploy the resultant images but this is outside of the scope of this guide.

Deploying and operating Mayastor in production contexts requires a foundational knowledge of Mayastor internals and best practices, found elsewhere within this documentation.

2MiB-sized Huge Pages must be supported and enabled on the Mayastor storage nodes. A minimum of 1024 such pages (i.e. 2GiB total) must be available exclusively to the Mayastor pod on each node, which should be verified thus:

    grep HugePages /proc/meminfo

    AnonHugePages:         0 kB
    ShmemHugePages:        0 kB
    HugePages_Total:    1024
    HugePages_Free:      671
    HugePages_Rsvd:        0
    HugePages_Surp:        0

If fewer than 1024 pages are available then the page count should be reconfigured on the worker node as required, accounting for any other workloads which may be scheduled on the same node and which also require them. For example:

    echo 1024 | sudo tee /sys/kernel/mm/hugepages/hugepages-2048kB/nr_hugepages

This change should also be made persistent across reboots by adding the required value to the file /etc/sysctl.conf like so:

    echo vm.nr_hugepages = 1024 | sudo tee -a /etc/sysctl.conf

If you modify the Huge Page configuration of a node, you MUST either restart kubelet or reboot the node. Mayastor will not deploy correctly if the available Huge Page count as reported by the node's kubelet instance does not satisfy the minimum requirements.

All worker nodes which will have Mayastor pods running on them must be labelled with the OpenEBS engine type "mayastor". This label will be used as a node selector by the Mayastor DaemonSet, which is deployed as a part of the Mayastor data plane components installation. To add this label to a node, execute:

    kubectl label node <node_name> openebs.io/engine=mayastor
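The Huge Page check described in this section can be automated before Mayastor is deployed. The following is a minimal sketch: `enough_free_hugepages` is a hypothetical helper that reads `/proc/meminfo`-style text and uses `HugePages_Free` as a stand-in for "available to Mayastor", which is an assumption. On a node where Mayastor is already running, the free count will be lower because the pod has already claimed its pages.

```shell
# Hypothetical check: reads /proc/meminfo-style text on stdin and succeeds
# only if HugePages_Free is at least the required page count (default 1024).
enough_free_hugepages() {
  required=${1:-1024}
  awk -v req="$required" '
    $1 == "HugePages_Free:" { free = $2; found = 1 }
    END { exit !(found && free + 0 >= req + 0) }
  '
}
```

A typical call would be `enough_free_hugepages 1024 < /proc/meminfo || echo "reconfigure huge pages"`.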

All worker nodes must satisfy the following requirements:

x86-64 CPU cores with SSE4.2 instruction support

Linux kernel 5.13 or higher is recommended (tested on Linux kernel 5.15). The kernel should have the following modules loaded:

nvme-tcp

Ensure that the following ports are not in use on the node:

10124: Mayastor gRPC server will use this port.

8420 / 4421: NVMf targets will use these ports.

Disks must be unpartitioned, unformatted, and used exclusively by the DiskPool.

The minimum capacity of the disks should be 10 GB.

Kubernetes core v1 API-group resources: Pod, Event, Node, Namespace, ServiceAccount, PersistentVolume, PersistentVolumeClaim, ConfigMap, Secret, Service, Endpoint.

Kubernetes batch API-group resources: CronJob, Job

Kubernetes apps API-group resources: Deployment, ReplicaSet, StatefulSet, DaemonSet

Kubernetes

The minimum supported worker node count is three nodes. When using the synchronous replication feature (N-way mirroring), the number of worker nodes to which Mayastor is deployed should be no less than the desired replication factor.

Mayastor supports the export and mounting of volumes over NVMe-oF TCP only. Worker node(s) on which a volume may be scheduled (to be mounted) must have the requisite initiator support installed and configured. In order to reliably mount Mayastor volumes over NVMe-oF TCP, a worker node's kernel version must be 5.13 or later and the nvme-tcp kernel module must be loaded.
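The kernel version requirement above can be checked on each worker node with a small script. This is a sketch under stated assumptions: `kernel_at_least` is a hypothetical helper that compares only the major and minor numbers of a release string in the usual `major.minor.patch-suffix` form.

```shell
# Hypothetical helper: succeeds if a kernel release string such as
# "5.13.0-27-generic" is at least the given major.minor version.
kernel_at_least() {
  release=$1
  want_major=$2
  want_minor=$3
  major=${release%%.*}        # text before the first dot
  rest=${release#*.}          # text after the first dot
  minor=${rest%%[!0-9]*}      # leading digits of the remainder
  [ "$major" -gt "$want_major" ] ||
    { [ "$major" -eq "$want_major" ] && [ "$minor" -ge "$want_minor" ]; }
}
```

On a node, `kernel_at_least "$(uname -r)" 5 13 && grep -q '^nvme_tcp ' /proc/modules` would also confirm that the module is loaded. Note that loadable modules are listed in `/proc/modules` with underscores, and that a module compiled into the kernel will not appear there at all.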


The steps and commands which follow are intended only for use in conjunction with Mayastor version(s) 2.1.x and above.

Add the OpenEBS Mayastor Helm repository.

    helm repo add mayastor https://openebs.github.io/mayastor-extensions/

    "mayastor" has been added to your repositories

Run the following command to discover all the stable versions of the added chart repository:

    helm search repo mayastor --versions

    NAME                CHART VERSION   APP VERSION   DESCRIPTION
    mayastor/mayastor   2.5.0           2.5.0         Mayastor Helm chart for Kubernetes

Run the following command to install Mayastor version 2.5.

    helm install mayastor mayastor/mayastor -n mayastor --create-namespace --version 2.5.0

    NAME: mayastor
    LAST DEPLOYED: Thu Sep 22 18:59:56 2022
    NAMESPACE: mayastor
    STATUS: deployed
    REVISION: 1
    NOTES:
    OpenEBS Mayastor has been installed. Check its status by running:
    $ kubectl get pods -n mayastor

    For more information or to view the documentation, visit our website at https://openebs.io.

Verify the status of the pods by running the command:

    kubectl get pods -n mayastor

    NAME                                         READY   STATUS    RESTARTS   AGE
    mayastor-agent-core-6c485944f5-c65q6         2/2     Running   0          2m13s
    mayastor-agent-ha-node-42tnm                 1/1     Running   0          2m14s
    mayastor-agent-ha-node-45srp                 1/1     Running   0          2m14s
    mayastor-agent-ha-node-tzz9x                 1/1     Running   0          2m14s
    mayastor-api-rest-5c79485686-7qg5p           1/1     Running   0          2m13s
    mayastor-csi-controller-65d6bc946-ldnfb      3/3     Running   0          2m13s
    mayastor-csi-node-f4fgd                      2/2     Running   0          2m13s
    mayastor-csi-node-ls9m4                      2/2     Running   0          2m13s
    mayastor-csi-node-xtcfc                      2/2     Running   0          2m13s
    mayastor-etcd-0                              1/1     Running   0          2m13s
    mayastor-etcd-1                              1/1     Running   0          2m13s
    mayastor-etcd-2                              1/1     Running   0          2m13s
    mayastor-io-engine-f2wm6                     2/2     Running   0          2m13s
    mayastor-io-engine-kqxs9                     2/2     Running   0          2m13s
    mayastor-io-engine-m44ms                     2/2     Running   0          2m13s
    mayastor-loki-0                              1/1     Running   0          2m13s
    mayastor-obs-callhome-5f47c6d78b-fzzd7       1/1     Running   0          2m13s
    mayastor-operator-diskpool-b64b9b7bb-vrjl6   1/1     Running   0          2m13s
    mayastor-promtail-cglxr                      1/1     Running   0          2m14s
    mayastor-promtail-jc2mz                      1/1     Running   0          2m14s
    mayastor-promtail-mr8nf                      1/1     Running   0          2m14s

ext4 and optionally xfs

Helm version must be v3.7 or later.

Each worker node which will host an instance of an io-engine pod must have the following resources free and available for exclusive use by that pod:

Two CPU cores

1GiB RAM

HugePage support

A minimum of 2GiB of 2MiB-sized pages

storage.k8s.io

Kubernetes apiextensions.k8s.io API-group resources: CustomResourceDefinition

Mayastor custom resources, that is, openebs.io API-group resources: DiskPool

Custom resources from Helm chart dependencies of Jaeger that are helpful for debugging:

ConsoleLink Resource from console.openshift.io API group

ElasticSearch Resource from logging.openshift.io API group

Kafka and KafkaUsers from kafka.strimzi.io API group

ServiceMonitor from monitoring.coreos.com API group

Ingress from networking.k8s.io API group and from extensions API group

Route from route.openshift.io API group

All resources from jaegertracing.io API group

    resources:
      limits:
        cpu: "2"
        memory: "1Gi"
        hugepages-2Mi: "2Gi"
      requests:
        cpu: "2"
        memory: "1Gi"
        hugepages-2Mi: "2Gi"

    resources:
      limits:
        cpu: "100m"
        memory: "50Mi"
      requests:
        cpu: "100m"
        memory: "50Mi"

    resources:
      limits:
        cpu: "32m"
        memory: "128Mi"
      requests:
        cpu: "16m"
        memory: "64Mi"

    resources:
      limits:
        cpu: "100m"
        memory: "64Mi"
      requests:
        cpu: "50m"
        memory: "32Mi"

    resources:
      limits:
        cpu: "1000m"
        memory: "32Mi"
      requests:
        cpu: "500m"
        memory: "16Mi"

    resources:
      limits:
        cpu: "100m"
        memory: "32Mi"
      requests:
        cpu: "50m"
        memory: "16Mi"

    ---
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: {{ .Release.Name }}-service-account
      namespace: {{ .Release.Namespace }}
    ---
    kind: ClusterRole
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: mayastor-cluster-role
    rules:
    - apiGroups: ["apiextensions.k8s.io"]
      resources: ["customresourcedefinitions"]
      verbs: ["create", "list"]
      # must read diskpool info
    - apiGroups: ["datacore.com"]
      resources: ["diskpools"]
      verbs: ["get", "list", "watch", "update", "replace", "patch"]
      # must update diskpool status
    - apiGroups: ["datacore.com"]
      resources: ["diskpools/status"]
      verbs: ["update", "patch"]
      # external provisioner & attacher
    - apiGroups: [""]
      resources: ["persistentvolumes"]
      verbs: ["get", "list", "watch", "update", "create", "delete", "patch"]
    - apiGroups: [""]
      resources: ["nodes"]
      verbs: ["get", "list", "watch"]
      # external provisioner
    - apiGroups: [""]
      resources: ["persistentvolumeclaims"]
      verbs: ["get", "list", "watch", "update"]
    - apiGroups: ["storage.k8s.io"]
      resources: ["storageclasses"]
      verbs: ["get", "list", "watch"]
    - apiGroups: [""]
      resources: ["events"]
      verbs: ["list", "watch", "create", "update", "patch"]
    - apiGroups: ["snapshot.storage.k8s.io"]
      resources: ["volumesnapshots"]
      verbs: ["get", "list"]
    - apiGroups: ["snapshot.storage.k8s.io"]
      resources: ["volumesnapshotcontents"]
      verbs: ["get", "list"]
    - apiGroups: [""]
      resources: ["nodes"]
      verbs: ["get", "list", "watch"]
      # external attacher
    - apiGroups: ["storage.k8s.io"]
      resources: ["volumeattachments"]
      verbs: ["get", "list", "watch", "update", "patch"]
    - apiGroups: ["storage.k8s.io"]
      resources: ["volumeattachments/status"]
      verbs: ["patch"]
      # CSI nodes must be listed
    - apiGroups: ["storage.k8s.io"]
      resources: ["csinodes"]
      verbs: ["get", "list", "watch"]
      # get kube-system namespace to retrieve Uid
    - apiGroups: [""]
      resources: ["namespaces"]
      verbs: ["get"]
    ---
    kind: ClusterRoleBinding
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: mayastor-cluster-role-binding
    subjects:
    - kind: ServiceAccount
      name: {{ .Release.Name }}-service-account
      namespace: {{ .Release.Namespace }}
    roleRef:
      kind: ClusterRole
      name: mayastor-cluster-role
      apiGroup: rbac.authorization.k8s.io

In previous versions, the control plane ensured replica redundancy by monitoring all volume targets and checking for any that were in the Degraded state, indicating that one or more replicas of that volume target were faulty. When a matching volume target was found, the faulty replica was removed. Then, a new replica was created and added to the volume target object. As part of adding the new child in the data-plane, a full rebuild was initiated from one of the existing Online replicas.

Mayastor is a performance optimised "Container Attached Storage" (CAS) solution of the CNCF project OpenEBS. The goal of OpenEBS is to extend Kubernetes with a declarative data plane, providing flexible persistent storage for stateful applications.

Design goals for Mayastor include:

Highly available, durable persistence of data

To be readily deployable and easily managed by autonomous SRE or development teams

To be a low-overhead abstraction for NVMe-based storage devices

Mayastor incorporates Intel's Storage Performance Development Kit. It has been designed from the ground up to leverage the protocol and compute efficiency of NVMe-oF semantics, and the performance capabilities of the latest generation of solid-state storage devices, in order to deliver a storage abstraction with performance overhead measured to be within the range of single-digit percentages.

By comparison, most "shared everything" storage systems are widely thought to impart an overhead of at least 40% (and sometimes as much as 80% or more) as compared to the capabilities of the underlying devices or cloud volumes; additionally traditional shared storage scales in an unpredictable manner as I/O from many workloads interact and compete for resources.

While Mayastor utilizes NVMe-oF, it does not require NVMe devices or cloud volumes to operate and can work well with other device types.

Mayastor's source code and documentation are distributed amongst a number of GitHub repositories under the OpenEBS organisation. The following list describes some of the main repositories but is not exhaustive.

openebs/mayastor : contains the source code of the data plane components

openebs/mayastor-control-plane : contains the source code of the control plane components

openebs/mayastor-api : contains common protocol buffer definitions and OpenAPI specifications for Mayastor components

: contains dependencies common to the control and data plane repositories

: contains components and utilities that provide extended functionalities like ease of installation, monitoring and observability aspects

: contains Mayastor's user documentation

The partial rebuild feature overcomes the above problem and helps achieve faster rebuild times. When a volume target encounters an I/O error on a child/replica, it marks the child as Faulted (removing it from the I/O path) and begins to maintain a write log of all subsequent writes. The core agent then starts a wait period, 10 minutes by default, for the replica to come back. If the child's replica is online again within this timeout, the control-plane requests the volume target to online the child and add it back to the I/O path, running a partial rebuild that uses the aforementioned write log.
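The write-log idea described above can be illustrated with a small, self-contained sketch. It is not Mayastor's implementation: replicas are modelled as plain Python lists of blocks, and the helper names `write_with_log` and `partial_rebuild` are hypothetical, chosen only for this example.

```python
from typing import List, Set


def write_with_log(healthy: List[bytes], write_log: Set[int], index: int, data: bytes) -> None:
    """Apply a write to the healthy replica and record the block index in the write log."""
    healthy[index] = data
    write_log.add(index)


def partial_rebuild(healthy: List[bytes], returning: List[bytes], write_log: Set[int]) -> int:
    """Copy only the logged blocks to the returning replica; return how many were copied."""
    copied = 0
    for index in sorted(write_log):
        returning[index] = healthy[index]
        copied += 1
    write_log.clear()
    return copied


# Two replicas start in sync with 8 blocks each (toy model, not real block devices).
healthy = [b"\x00"] * 8
faulted = list(healthy)

# The second replica faults; later writes reach only the healthy one and are logged.
log: Set[int] = set()
write_with_log(healthy, log, 2, b"\x01")
write_with_log(healthy, log, 5, b"\x02")
write_with_log(healthy, log, 2, b"\x03")  # the same block written twice is logged once

# The faulted replica returns within the timeout: only the logged blocks are copied.
copied = partial_rebuild(healthy, faulted, log)
print(copied, faulted == healthy)
```

In this toy model only two of the eight blocks are copied, which is the saving a partial rebuild aims for compared with copying every block.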

The data-plane handles both full and partial replica rebuilds. To view history of the rebuilds that an existing volume target has undergone during its lifecycle until now, you can use the given kubectl command.

To get the output in table format:

kubectl mayastor get rebuild-history {your_volume_UUID}

DST                                   SRC                                   STATE      TOTAL  RECOVERED  TRANSFERRED  IS-PARTIAL  START-TIME            END-TIME
b5de71a6-055d-433a-a1c5-2b39ade05d86  0dafa450-7a19-4e21-a919-89c6f9bd2a97  Completed  7MiB   7MiB       0 B          true        2023-07-04T05:45:47Z  2023-07-04T05:45:47Z
b5de71a6-055d-433a-a1c5-2b39ade05d86  0dafa450-7a19-4e21-a919-89c6f9bd2a97  Completed  7MiB   7MiB       0 B          true        2023-07-04T05:45:46Z  2023-07-04T05:45:46Z

To get the output in JSON format:

kubectl mayastor get rebuild-history {your_volume_UUID} -ojson

{
  "targetUuid": "c9eb4172-e90c-40ca-9db0-26b2ae372b28",
  "records": [
    {
      "childUri": "nvmf://10.1.0.9:8420/nqn.2019-05.io.openebs:b5de71a6-055d-433a-a1c5-2b39ade05d86?uuid=b5de71a6-055d-433a-a1c5-2b39ade05d86",
      "srcUri": "bdev:///0dafa450-7a19-4e21-a919-89c6f9bd2a97?uuid=0dafa450-7a19-4e21-a919-89c6f9bd2a97",
      "rebuildJobState": "Completed",
      "blocksTotal": 14302,
      "blocksRecovered": 14302,
      "blocksTransferred": 0,
      "blocksRemaining": 0,
      "blockSize": 512,
      "isPartial": true,
      "startTime": "2023-07-04T05:45:47.765932276Z",
      "endTime": "2023-07-04T05:45:47.766825274Z"
    },
    {

For example: kubectl mayastor get rebuild-history e898106d-e735-4edf-aba2-932d42c3c58d -ojson
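As a rough aid for reading the JSON output, the sketch below converts the block counters of one record into MiB. It assumes that the counters are expressed in units of `blockSize` bytes, which appears consistent with the 7MiB shown in the table output but is an inference from the sample rather than a documented guarantee. The helper name `summarize_record` is hypothetical.

```python
import json

MIB = 1024 * 1024


def summarize_record(record: dict) -> dict:
    """Convert the block counters of one rebuild record into MiB (assumes blockSize is in bytes)."""
    block_size = record["blockSize"]
    return {
        "total_mib": record["blocksTotal"] * block_size / MIB,
        "recovered_mib": record["blocksRecovered"] * block_size / MIB,
        "transferred_mib": record["blocksTransferred"] * block_size / MIB,
        "is_partial": record["isPartial"],
    }


# Counter fields copied from the first record of the sample output above.
sample = json.loads("""
{
  "rebuildJobState": "Completed",
  "blocksTotal": 14302,
  "blocksRecovered": 14302,
  "blocksTransferred": 0,
  "blocksRemaining": 0,
  "blockSize": 512,
  "isPartial": true
}
""")

summary = summarize_record(sample)
print(f"total={summary['total_mib']:.2f} MiB, partial={summary['is_partial']}")
```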


The Mayastor kubectl plugin can be used to view and manage Mayastor resources such as nodes, pools and volumes. It is also used for operations such as scaling the replica count of volumes.

The Mayastor kubectl plugin is available for the Linux platform. The binary for the plugin can be found .

Add the downloaded Mayastor kubectl plugin under $PATH.

To verify the installation, execute:

Sample command to use kubectl plugin:

You can use the plugin with the following options:

All the above resource information can be retrieved for a particular resource using its ID. The command to do so is as follows: kubectl mayastor get <resource_name> <resource_id>

Table is the default output format.

The plugin requires access to the Mayastor REST server for execution. It gets the master node IP from the kube-config file. In case of any failure, the REST endpoint can be specified using the '--rest' flag.

The plugin currently does not have authentication support.

The plugin can operate only over HTTP.


The StorageClass resource in Kubernetes is used to supply parameters to volumes when they are created. It is a convenient way of grouping volumes with common characteristics. All parameters take a string value. A brief explanation of each supported Mayastor parameter follows.


Cordoning a node marks or taints the node as unschedulable. This prevents the scheduler from deploying new resources on that node. However, the resources that were deployed prior to cordoning off the node will remain intact.

This feature is in line with the node-cordon functionality of Kubernetes.

To add a label and cordon a node, execute:

The storage class parameter local has been deprecated and is a breaking change in Mayastor version 2.0. Ensure that this parameter is not used.

The file system that will be used when mounting the volume. The supported file systems are ext4, xfs and btrfs; the default when none is specified is ext4. We recommend xfs, which is considered to be more advanced and performant. Please ensure the requested filesystem driver is installed on all worker nodes in the cluster before using it.

The parameter 'protocol' takes the value nvmf (NVMe over TCP protocol). It is used to mount the volume (target) on the application node.

The string value of the repl parameter must be a number greater than zero. The Mayastor control plane will always try to keep this many copies of the data if possible. If set to one, the volume does not tolerate any node failure. If set to two, it tolerates one node failure. If set to three, it tolerates two node failures, and so on.
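The relationship between the replica count and the number of tolerated node failures can be written down as a one-line rule. The sketch below is only an illustration of the arithmetic in the paragraph above; the function name `tolerated_node_failures` is hypothetical and is not part of any Mayastor API.

```python
def tolerated_node_failures(repl: str) -> int:
    """Return how many node failures a volume tolerates for a given replica count string."""
    count = int(repl)  # storage class parameters are passed as strings
    if count <= 0:
        raise ValueError("the replica count must be a number greater than zero")
    return count - 1


for value in ("1", "2", "3"):
    print(value, "->", tolerated_node_failures(value))
```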

Volumes can be either thick or thin provisioned. Adding the thin parameter to the StorageClass YAML allows the volume to be thinly provisioned. To do so, add thin: true under the parameters spec in the StorageClass YAML.

When volumes are thinly provisioned, the user needs to monitor the pools. If these pools start to run out of space, either new pools must be added or volumes deleted to prevent thinly provisioned volumes from becoming degraded or faulted. This is because when a pool with more than one replica runs out of space, Mayastor moves the largest out-of-space replica to another pool and then executes a rebuild. It then checks whether all the replicas have sufficient space; if not, it moves the next largest replica to another pool, and this process continues until all the replicas have sufficient space.

The agents.core.capacity.thin spec present in the Mayastor helm chart consists of the following configurable parameters that can be used to control the scheduling of thinly provisioned replicas:

poolCommitment parameter specifies the maximum allowed pool commitment limit (in percent).

volumeCommitment parameter specifies the minimum amount of free space that must be present in each replica pool in order to create new replicas for an existing volume. This value is specified as a percentage of the volume size.

volumeCommitmentInitial parameter specifies the minimum amount of free space that must be present in each replica pool in order to create new replicas for a new volume. This value is specified as a percentage of the volume size.
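One possible reading of these three parameters is sketched below. This is an interpretation of the descriptions above, not the scheduler's actual logic: it assumes that "pool commitment" means the sum of the sizes of all replicas placed on a pool relative to the pool's capacity, and all names and numbers in the example (`Pool`, `can_host_thin_replica`, the percentages and sizes) are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Pool:
    capacity: int   # total pool capacity (GiB in this example)
    committed: int  # assumed: sum of the sizes of the replicas already placed on the pool
    used: int       # space actually allocated on the pool


def can_host_thin_replica(pool: Pool, volume_size: int,
                          pool_commitment_pct: int, volume_commitment_pct: int) -> bool:
    """Check both limits for placing one more thin replica of the given size.

    volume_commitment_pct stands for volumeCommitment (existing volume) or
    volumeCommitmentInitial (new volume), depending on the case being checked.
    """
    free = pool.capacity - pool.used
    # Commitment after placement must stay within the allowed percentage of capacity.
    within_commitment = (pool.committed + volume_size) * 100 <= pool.capacity * pool_commitment_pct
    # Free space must be at least the required percentage of the volume size.
    enough_free = free * 100 >= volume_size * volume_commitment_pct
    return within_commitment and enough_free


# Hypothetical numbers: a 100 GiB pool, 200 GiB already committed, 70 GiB in use.
pool = Pool(capacity=100, committed=200, used=70)
print(can_host_thin_replica(pool, volume_size=50, pool_commitment_pct=250, volume_commitment_pct=40))
print(can_host_thin_replica(pool, volume_size=60, pool_commitment_pct=250, volume_commitment_pct=40))
```

Integer arithmetic is used for the percentage comparisons so that the checks are exact.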

stsAffinityGroup represents a collection of volumes that belong to instances of Kubernetes StatefulSet. When a StatefulSet is deployed, each instance within the StatefulSet creates its own individual volume, which collectively forms the stsAffinityGroup. Each volume within the stsAffinityGroup corresponds to a pod of the StatefulSet.

This feature enforces the following rules to ensure the proper placement and distribution of replicas and targets so that there isn't any single point of failure affecting multiple instances of StatefulSet.

Anti-Affinity among single-replica volumes : This rule ensures that replicas of different volumes are distributed in such a way that there is no single point of failure, by avoiding the colocation of replicas from different volumes on the same node.

Anti-Affinity among multi-replica volumes :

If the affinity group volumes have multiple replicas, they already have some level of redundancy. This feature ensures that in such cases, the replicas are distributed optimally for the stsAffinityGroup volumes.

Anti-affinity among targets :

The High Availability feature ensures that there is no single point of failure for the targets. The stsAffinityGroup ensures that in such cases, the targets are distributed optimally for the stsAffinityGroup volumes.

By default, the stsAffinityGroup feature is disabled. To enable it, modify the storage class YAML by setting the parameters.stsAffinityGroup parameter to true.

cloneFsIdAsVolumeId is a setting for volume clones/restores with two options: true and false. By default, it is set to false.

When set to true, the created clone/restore's filesystem uuid will be set to the restore volume's uuid. This is important because some file systems, like XFS, do not allow duplicate filesystem uuid on the same machine by default.

When set to false, the created clone/restore's filesystem uuid will be the same as the original volume uuid, but it will be mounted using the nouuid flag to bypass duplicate uuid validation.

kubectl mayastor -V
kubectl-plugin 1.0.0

USAGE:
    kubectl-mayastor [OPTIONS] <SUBCOMMAND>

OPTIONS:
    -h, --help
            Print help information

    -j, --jaeger <JAEGER>
            Trace rest requests to the Jaeger endpoint agent

    -k, --kube-config-path <KUBE_CONFIG_PATH>
            Path to kubeconfig file

    -n, --namespace <NAMESPACE>
            Kubernetes namespace of mayastor service, defaults to mayastor [default: mayastor]

    -o, --output <OUTPUT>
            The Output, viz yaml, json [default: none]

    -r, --rest <REST>
            The rest endpoint to connect to

    -t, --timeout <TIMEOUT>
            Timeout for the REST operations [default: 10s]

    -V, --version
            Print version information

SUBCOMMANDS:
    cordon      'Cordon' resources
    drain       'Drain' resources
    dump        `Dump` resources
    get         'Get' resources
    help        Print this message or the help of the given subcommand(s)
    scale       'Scale' resources
    uncordon    'Uncordon' resources

kubectl mayastor get volumes

ID                                    REPLICAS  TARGET-NODE  ACCESSIBILITY  STATUS  SIZE
18e30e83-b106-4e0d-9fb6-2b04e761e18a  4         mayastor-1   nvmf           Online  10485761
0c08667c-8b59-4d11-9192-b54e27e0ce0f  4         mayastor-2   <none>         Online  10485761

kubectl mayastor get pools

ID               TOTAL CAPACITY  USED CAPACITY  DISKS                                                     NODE      STATUS  MANAGED
mayastor-pool-1  5360320512      1111490560     aio:///dev/vdb?uuid=d8a36b4b-0435-4fee-bf76-f2aef980b833  kworker1  Online  true
mayastor-pool-2  5360320512      2172649472     aio:///dev/vdc?uuid=bb12ec7d-8fc3-4644-82cd-dee5b63fc8c5  kworker1  Online  true
mayastor-pool-3  5360320512      3258974208     aio:///dev/vdb?uuid=f324edb7-1aca-41ec-954a-9614527f77e1  kworker2  Online  false

kubectl mayastor get nodes

ID          GRPC ENDPOINT   STATUS
mayastor-2  10.1.0.7:10124  Online
mayastor-1  10.1.0.6:10124  Online
mayastor-3  10.1.0.8:10124  Online

kubectl mayastor scale volume <volume_id> <size>

Volume 0c08667c-8b59-4d11-9192-b54e27e0ce0f Scaled Successfully 🚀

kubectl mayastor -ojson get <resource_type>

[{"spec":{"num_replicas":2,"size":67108864,"status":"Created","target":{"node":"ksnode-2","protocol":"nvmf"},"uuid":"5703e66a-e5e5-4c84-9dbe-e5a9a5c805db","topology":{"explicit":{"allowed_nodes":["ksnode-1","ksnode-3","ksnode-2"],"preferred_nodes":["ksnode-2","ksnode-3","ksnode-1"]}},"policy":{"self_heal":true}},"state":{"target":{"children":[{"state":"Online","uri":"bdev:///ac02cf9e-8f25-45f0-ab51-d2e80bd462f1?uuid=ac02cf9e-8f25-45f0-ab51-d2e80bd462f1"},{"state":"Online","uri":"nvmf://192.168.122.6:8420/nqn.2019-05.io.openebs:7b0519cb-8864-4017-85b6-edd45f6172d8?uuid=7b0519cb-8864-4017-85b6-edd45f6172d8"}],"deviceUri":"nvmf://192.168.122.234:8420/nqn.2019-05.io.openebs:nexus-140a1eb1-62b5-43c1-acef-9cc9ebb29425","node":"ksnode-2","rebuilds":0,"protocol":"nvmf","size":67108864,"state":"Online","uuid":"140a1eb1-62b5-43c1-acef-9cc9ebb29425"},"size":67108864,"status":"Online","uuid":"5703e66a-e5e5-4c84-9dbe-e5a9a5c805db"}}]

kubectl mayastor get volume-replica-topology <volume_id>

ID                                    NODE         POOL    STATUS  CAPACITY  ALLOCATED  SNAPSHOTS  CHILD-STATUS  REASON  REBUILD
a34dbaf4-e81a-4091-b3f8-f425e5f3689b  io-engine-1  pool-1  Online  12MiB     0 B        12MiB      <none>        <none>  <none>

kubectl mayastor get volume-snapshots

ID                                    TIMESTAMP             SOURCE-SIZE  ALLOCATED-SIZE  TOTAL-ALLOCATED-SIZE  SOURCE-VOL
25823425-41fa-434a-9efd-a356b70b5d7c  2023-07-07T13:20:17Z  10MiB        12MiB           12MiB                 ec4e66fd-3b33-4439-b504-d49aba53da26

kubectl-mayastor cordon node <node_name> <label>

kubectl-mayastor get cordon nodes

To view the labels associated with a cordoned node, execute:

kubectl-mayastor get cordon node <node_name>

In order to make a node schedulable again, execute:

kubectl-mayastor uncordon node <node_name> <label>

The above command allows the Kubernetes scheduler to deploy resources on the node.


Mayastor 2.0 enhances High Availability (HA) of the volume target with the nexus switch-over feature. In the event of a target failure, the switch-over feature quickly detects the failure and spawns a new nexus to ensure I/O continuity. The HA feature consists of two components: the HA node agent (which runs on each csi-node) and the cluster agent (which runs alongside the agent-core). The HA node agent looks for I/O path failures from applications to their corresponding targets. If any such broken path is encountered, the HA node agent informs the cluster agent. The cluster agent then creates a new target on a different (live) node. Once the target is created, the node agent establishes a new path between the application and its corresponding target. The HA feature restores the broken path within seconds, ensuring negligible downtime.

The volume's replica count must be higher than 1 for a new target to be established as part of switch-over.

The HA feature is enabled by default; to disable it, pass the parameter --set=agents.ha.enabled=false with the helm install command.


When a Mayastor volume is provisioned based on a StorageClass which has a replication factor greater than one (set by its repl parameter), the control plane will attempt to maintain, through a 'Kubernetes-like' reconciliation loop, that number of identical copies of the volume's data, called "replicas" (a replica is a nexus target "child"), at any point in time. When a volume is first provisioned the control plane will attempt to create the required number of replicas, whilst adhering to its internal heuristics for their location within the cluster (which will be discussed shortly). If it succeeds, the volume will become available and will bind with the PVC. If the control plane cannot identify a sufficient number of eligible Mayastor Pools in which to create the required replicas at the time of provisioning, the operation will fail; the Mayastor Volume will not be created and the associated PVC will not be bound. Kubernetes will periodically retry the volume creation and if at any time the appropriate number of pools can be selected, the volume provisioning should succeed.

Once a volume is processing I/O, each of its replicas will also receive I/O. Reads are round-robin distributed across replicas, whilst writes must be written to all. In a real world environment this is attended by the possibility that I/O to one or more replicas might fail at any time. Possible reasons include transient loss of network connectivity, node reboots, node or disk failure. If a volume's nexus (NVMe controller) encounters 'too many' failed I/Os for a replica, then that replica's child status will be marked Faulted and it will no longer receive I/O requests from the nexus. It will remain a member of the volume, whose departure from the desired state with respect to replica count will be reflected with a volume status of Degraded. How many I/O failures are considered "too many" in this context is outside the scope of this discussion.
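The I/O behaviour described above can be modelled in a few lines. The sketch below is a toy model, not the nexus implementation: the class name `Nexus`, its methods, and in particular the `fault_threshold` value are hypothetical, since the number of failures that counts as "too many" is deliberately left out of this discussion.

```python
from typing import Dict, List


class Nexus:
    """Toy model: round-robin reads, writes to all Online children, faulting on repeated errors."""

    def __init__(self, replicas: List[str], fault_threshold: int) -> None:
        self.state: Dict[str, str] = {name: "Online" for name in replicas}
        self.errors: Dict[str, int] = {name: 0 for name in replicas}
        self.fault_threshold = fault_threshold  # hypothetical stand-in for "too many" failed I/Os
        self._next = 0

    def online(self) -> List[str]:
        return [name for name, state in self.state.items() if state == "Online"]

    def read_target(self) -> str:
        """Pick the next Online child in round-robin order."""
        candidates = self.online()
        target = candidates[self._next % len(candidates)]
        self._next += 1
        return target

    def write_targets(self) -> List[str]:
        """Writes must go to every child that is still in the I/O path."""
        return self.online()

    def record_io_error(self, name: str) -> None:
        """Count a failed I/O; fault the child once the threshold is reached."""
        self.errors[name] += 1
        if self.errors[name] >= self.fault_threshold:
            self.state[name] = "Faulted"

    def volume_status(self) -> str:
        return "Online" if all(s == "Online" for s in self.state.values()) else "Degraded"


nexus = Nexus(["replica-1", "replica-2", "replica-3"], fault_threshold=3)
reads = [nexus.read_target() for _ in range(4)]

for _ in range(3):
    nexus.record_io_error("replica-2")

print(reads, nexus.write_targets(), nexus.volume_status())
```

Note that the faulted child stays in `state` as a member of the volume, which is why the volume reports Degraded rather than Online.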

The control plane will first 'retire' the old, faulted replica, which will then no longer be associated with the volume. Once retired, a replica will become available for garbage collection (deletion from the Mayastor Pool containing it), assuming that the nature of the failure was such that the pool itself is still viable (i.e. the underlying disk device is still accessible). Then it will attempt to restore the desired state (replica count) by creating a new replica, following its replica placement rules. If it succeeds, the nexus will "rebuild" that new replica, performing a full copy of all data from a healthy (Online) replica, i.e. the source. This process can proceed whilst the volume continues to process application I/Os, although it will contend for disk throughput at both the source and destination disks.

If a nexus is cleanly restarted, i.e. the Mayastor pod hosting it restarts gracefully, with the assistance of the control plane it will 'recompose' itself; all of the previous healthy member replicas will be re-attached to it. If previously faulted replicas are available to be re-connected (Online), then the control plane will attempt to reuse and rebuild them directly, rather than seek replacements for them first. This edge-case therefore does not result in the retirement of the affected replicas; they are simply reused. If the rebuild fails then we follow the above process of removing a Faulted replica and adding a new one. On an unclean restart (i.e. the Mayastor pod hosting the nexus crashes or is forcefully deleted) only one healthy replica will be re-attached and all other replicas will eventually be rebuilt.

Once provisioned, the replica count of a volume can be changed using the kubectl-mayastor plugin scale subcommand. The value of the num_replicas field may be either increased or decreased by one and the control plane will attempt to satisfy the request by creating or destroying a replica as appropriate, following the same replica placement rules as described herein. If the replica count is reduced, faulted replicas will be selected for removal in preference to healthy ones.

Accurate predictions of the behaviour of Mayastor with respect to replica placement and management of replica faults can be made by reference to these 'rules', which are a simplified representation of the actual logic:

"Rule 1": A volume can only be provisioned if the replica count (and capacity) of its StorageClass can be satisfied at the time of creation

"Rule 2": Every replica of a volume must be placed on a different Mayastor Node

"Rule 3": Children with the state Faulted are always selected for retirement in preference to those with state Online

N.B.: By application of the 2nd rule, replicas of the same volume cannot exist within different pools on the same Mayastor Node.
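The three rules can be expressed as a short sketch. Like the rules themselves, this is a simplified representation and not the control plane's actual code: the `Pool` type, the function names, and the tie-breaking choice of preferring pools with more free capacity are assumptions made for this example.

```python
from typing import Dict, List, NamedTuple, Optional, Set


class Pool(NamedTuple):
    name: str
    node: str
    free: int  # free capacity, in arbitrary units


def place_replicas(pools: List[Pool], count: int, size: int,
                   occupied_nodes: Optional[Set[str]] = None) -> Optional[List[Pool]]:
    """Choose `count` (>= 1) pools for new replicas, or return None if that is not possible."""
    nodes = set(occupied_nodes or ())
    chosen: List[Pool] = []
    # Assumption: prefer pools with more free capacity; equal pools keep their input order.
    for pool in sorted(pools, key=lambda p: p.free, reverse=True):
        if pool.node in nodes or pool.free < size:
            continue  # Rule 2: one replica per node; the pool must also have the capacity
        chosen.append(pool)
        nodes.add(pool.node)
        if len(chosen) == count:
            return chosen
    return None  # Rule 1: the request cannot be satisfied


def pick_for_retirement(children: Dict[str, str]) -> str:
    """Rule 3: a Faulted child is selected in preference to an Online one."""
    return min(children, key=lambda name: children[name] != "Faulted")


# Two nodes with two pools each, all with equal free capacity.
pools = [
    Pool("Pool-1-A", "Node-1", 10), Pool("Pool-1-B", "Node-1", 10),
    Pool("Pool-2-A", "Node-2", 10), Pool("Pool-2-B", "Node-2", 10),
]
placed = place_replicas(pools, count=2, size=5)
print([p.name for p in placed])

# A third replica cannot be placed: both nodes already host a replica of the volume.
print(place_replicas(pools, count=1, size=5, occupied_nodes={"Node-1", "Node-2"}))

print(pick_for_retirement({"child-a": "Online", "child-b": "Faulted"}))
```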

A cluster has two Mayastor nodes deployed, "Node-1" and "Node-2". Each Mayastor node hosts two Mayastor pools and currently, no Mayastor volumes have been defined. Node-1 hosts pools "Pool-1-A" and "Pool-1-B", whilst Node-2 hosts "Pool-2-A" and "Pool-2-B". When a user creates a PVC from a StorageClass which defines a replica count of 2, the Mayastor control plane will seek to place one replica on each node (it 'follows' Rule 2). Since in this example it can find a suitable candidate pool with sufficient free capacity on each node, the volume is provisioned and becomes "healthy" (Rule 1). Pool-1-A is selected on Node-1, and Pool-2-A selected on Node-2 (all pools being of equal capacity and replica count, in this initial 'clean' state).

Sometime later, the physical disk of Pool-2-A encounters a hardware failure and goes offline. The volume is in use at the time, so its nexus (NVMe controller) starts to receive I/O errors for the replica hosted in that Pool. The associated replica's child from Pool-2-A enters the Faulted state and the volume state becomes Degraded (as seen through the kubectl-mayastor plugin).

Expected Behaviour: The volume will maintain read/write access for the application via the remaining healthy replica. The faulty replica from Pool-2-A will be removed from the nexus, thus changing the nexus state to Online as the remaining replica is healthy. A new replica is created on either Pool-2-A or Pool-2-B and added to the nexus. The new replica child is rebuilt and eventually the state of the volume returns to Online.

A cluster has three Mayastor nodes deployed, "Node-1", "Node-2" and "Node-3". Each Mayastor node hosts one pool: "Pool-1" on Node-1, "Pool-2" on Node-2 and "Pool-3" on Node-3. No Mayastor volumes have yet been defined; the cluster is 'clean'. A user creates a PVC from a StorageClass which defines a replica count of 2. The control plane determines that it is possible to accommodate one replica within the available capacity of each of Pool-1 and Pool-2, and so the volume is created. An application is deployed on the cluster which uses the PVC, so the volume receives I/O.

Unfortunately, due to user error the SAN LUN which is used to persist Pool-2 becomes detached from Node-2, causing I/O failures in the replica which it hosts for the volume. As with scenario one, the volume state becomes Degraded and the faulted child's state becomes Faulted.

Expected Behaviour: Since there is a Mayastor pool on Node-3 which has sufficient capacity to host a replacement replica, a new replica can be created (Rule 2: this 'third' incoming replica isn't located on either of the nodes that the two original ones are). The faulted replica in Pool-2 is retired from the nexus and a new replica is created on Pool-3 and added to the nexus. The new replica is rebuilt and eventually the state of the volume returns to Online.

In the cluster from Scenario three, sometime after the Mayastor volume has returned to the Online state, a user scales up the volume, increasing the num_replicas value from 2 to 3. Before doing so they corrected the SAN misconfiguration and ensured that the pool on Node-2 was Online.

Expected Behaviour: The control plane will attempt to reconcile the difference in current (replicas = 2) and desired (replicas = 3) states. Since Node-2 no longer hosts a replica for the volume (the previously faulted replica was successfully retired and is no longer a member of the volume's nexus), the control plane will select it to host the new replica required (Rule 2 permits this). The volume state will become initially Degraded to reflect the difference in actual vs required redundant data copies but a rebuild of the new replica will be performed and eventually the volume state will be Online again.

A cluster has three Mayastor nodes deployed: "Node-1", "Node-2" and "Node-3". Each Mayastor node hosts two Mayastor pools and currently, no Mayastor volumes have been defined. Node-1 hosts pools "Pool-1-A" and "Pool-1-B", whilst Node-2 hosts "Pool-2-A" and "Pool-2-B" and Node-3 hosts "Pool-3-A" and "Pool-3-B". A single volume exists in the cluster, which has a replica count of 3. The volume's replicas are all healthy and are located on Pool-1-A, Pool-2-A and Pool-3-A. An application is using the volume, so all replicas are receiving I/O.

The host Node-3 goes down causing failure of all I/O sent to the replica it hosts from Pool-3-A.

Expected Behaviour: The volume will enter and remain in the Degraded state. The associated child from the replica from Pool-3-A will be in the state Faulted, as observed in the volume through the kubectl-mayastor plugin. Said replica will be removed from the Nexus thus changing the nexus state to Online as the other replicas are healthy. The replica will then be disowned from the volume (it won't be possible to delete it since the host is down). Since Rule 2 dictates that every replica of a volume must be placed on a different Mayastor Node no new replica can be created at this point and the volume remains Degraded indefinitely.

Given the post-host failure situation of Scenario four, the user scales down the volume, reducing the value of num_replicas from 3 to 2.

Expected Behaviour: The control plane will reconcile the actual (replicas=3) vs desired (replicas=2) state of the volume. The volume state will become Online again.

In scenario Five, after scaling down the Mayastor volume the user waits for the volume state to become Online again. The desired and actual replica count are now 2. The volume's replicas are located in pools on both Node-1 and Node-2. The Node-3 is now back up and its pools Pool-3-A and Pool-3-B are Online. The user then scales the volume again, increasing the num_replicas from 2 to 3 again.

Expected Behaviour: The volume's state will become Degraded, reflecting the difference in desired vs actual replica count. The control plane will select a pool on Node-3 as the location for the new replica required. Node-3 is therefore again a suitable candidate and has online pools with sufficient capacity.


Volume snapshots are copies of a persistent volume at a specific point in time. They can be used to restore a volume to a previous state or create a new volume. Mayastor provides support for industry standard copy-on-write (COW) snapshots, which is a popular methodology for taking snapshots by keeping track of only those blocks that have changed. Mayastor incremental snapshot capability enhances data migration and portability in Kubernetes clusters across different cloud providers or data centers. Using standard kubectl commands, you can seamlessly perform operations on snapshots and clones in a fully Kubernetes-native manner.

Use cases for volume snapshots include:

Efficient replication for backups.

Utilization of clones for troubleshooting.

Development against a read-only copy of data.

Volume snapshots allow the creation of read-only incremental copies of volumes, enabling you to maintain a history of your data. These volume snapshots possess the following characteristics:

Consistency: The data stored within a snapshot remains consistent across all replicas of the volume, whether local or remote.

Immutability: Once a snapshot is successfully created, the data contained within it cannot be modified.

Currently, Mayastor supports the following operations related to volume snapshots:

Creating a snapshot for a PVC

Listing available snapshots for a PVC

Deleting a snapshot for a PVC

Deploy and configure Mayastor by following the steps given and create disk pools.

Create a Mayastor StorageClass with single replica.

Create a PVC using steps and check if the status of the PVC is Bound.

Copy the PVC name, for example, ms-volume-claim.

(Optional) Create an application by following steps.

You can create a snapshot (with or without an application) using the PVC. Follow the steps below to create a volume snapshot:

Apply VolumeSnapshotClass details

Apply the snapshot

To retrieve the details of the created snapshots, use the following command:

To delete a snapshot, use the following command:


If all verification steps in the preceding stages were satisfied, then Mayastor has been successfully deployed within the cluster. In order to verify basic functionality, we will now dynamically provision a Persistent Volume based on a Mayastor StorageClass, mount that volume within a small test pod which we'll create, and use the fio utility within that pod to check that I/O to the volume is processed correctly.

Use kubectl to create a PVC based on a StorageClass that you created in the previous stage. In the example shown below, we'll consider that StorageClass to have been named "mayastor-1". Replace the value of the field "storageClassName" with the name of your own Mayastor-based StorageClass.

For the purposes of this quickstart guide, it is suggested to name the PVC "ms-volume-claim", as this is what will be illustrated in the example steps which follow.

If you used the storage class example from the previous stage, then the volume binding mode is set to WaitForFirstConsumer. That means that the volume won't be created until there is an application using the volume. We will go ahead and create the application pod and then check all resources that should have been created as part of that in Kubernetes.

The Mayastor CSI driver will cause the application pod and the corresponding Mayastor volume's NVMe target/controller ("Nexus") to be scheduled on the same Mayastor Node, in order to assist with restoration of volume and application availability in the event of a storage node failure.

In this version, applications using PVs provisioned by Mayastor can only be successfully scheduled on Mayastor Nodes. This behaviour is controlled by the local: parameter of the corresponding StorageClass, which by default is set to a value of true. Therefore, this is the only supported value for this release - setting a non-local configuration may cause scheduling of the application pod to fail, as the PV cannot be mounted to a worker node other than a MSN. This behaviour will change in a future release.

We will now verify the Volume Claim and that the corresponding Volume and Mayastor Volume resources have been created and are healthy.

The status of the PVC should be "Bound".

The status of the volume should be "online".

Verify that the pod has been deployed successfully, having the status "Running". It may take a few seconds after creating the pod before it reaches that status, proceeding via the "ContainerCreating" state.
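Because the PVC and the pod may take a few seconds to reach their expected states, the checks above can be wrapped in a small polling helper. The following is a sketch only: `wait_for_value` is a hypothetical helper name, and the pod name used in the usage note is an assumption.

```shell
# Poll a command until its output equals an expected value, or time out.
# Usage: wait_for_value <expected> <timeout_seconds> <command> [args...]
wait_for_value() {
  expected=$1
  timeout=$2
  shift 2
  elapsed=0
  actual=""
  while [ "$elapsed" -lt "$timeout" ]; do
    # Run the command; a failing command simply yields an empty value.
    actual=$("$@" 2>/dev/null) || actual=""
    if [ "$actual" = "$expected" ]; then
      return 0
    fi
    sleep 1
    elapsed=$((elapsed + 1))
  done
  echo "timed out waiting for '$expected' (last value: '$actual')" >&2
  return 1
}
```

For example, `wait_for_value Bound 60 kubectl get pvc ms-volume-claim -o jsonpath='{.status.phase}'` waits for the claim to bind, and `wait_for_value Running 120 kubectl get pod <pod_name> -o jsonpath='{.status.phase}'` does the same for the test pod, where `<pod_name>` is whatever name you gave that pod.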

We now execute the FIO Test utility against the Mayastor PV for 60 seconds, checking that I/O is handled as expected and without errors. In this quickstart example, we use a pattern of random reads and writes, with a block size of 4k and a queue depth of 16.
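A fio invocation matching this description might be assembled as sketched below. The random read/write pattern, 4k block size, queue depth of 16 and 60-second runtime come from the text above; the job name, file path, file size, I/O engine and direct I/O setting are assumptions for illustration, and `fio_command` is a hypothetical helper.

```shell
# Print a fio command line matching the parameters described above.
# Assumptions: job name, target path, 800m size, libaio and direct I/O
# are illustrative choices and are not taken from this guide.
fio_command() {
  target=${1:-/volume/test}   # hypothetical path on the mounted Mayastor PV
  echo fio --name=benchtest --filename="$target" --size=800m \
    --direct=1 --ioengine=libaio \
    --rw=randrw --bs=4k --iodepth=16 \
    --time_based --runtime=60
}
```

The printed command can be run inside the test pod, for instance with `kubectl exec -it <pod_name> -- $(fio_command)`, substituting the name of your own pod.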

If no errors are reported in the output then Mayastor has been correctly configured and is operating as expected. You may create and consume additional Persistent Volumes with your own test applications.

The node drain functionality marks the node as unschedulable and then gracefully moves all the volume targets off the drained node. This feature is in line with the node drain functionality of Kubernetes.

To start the drain operation, execute:

kubectl-mayastor drain node <node_name> <label>

To get the list of nodes on which the drain operation has been performed, execute:

kubectl-mayastor get drain nodes

To halt the drain operation or to make the node schedulable again, execute:

A volume restore from an existing snapshot creates an exact replica of a storage volume as it was captured at a specific point in time. Volume restores serve as an essential tool for data protection, recovery, and efficient management in Kubernetes environments. This article provides a step-by-step guide on how to create a volume restore.

To begin, you'll need to create a StorageClass that defines the properties of the snapshot to be restored. Refer to the StorageClass documentation for more details. Use the following command to create the StorageClass:

Note the name of the StorageClass, which, in this example, is mayastor-1-restore.

You need to create a volume snapshot before proceeding with the restore. Follow the steps outlined in the volume snapshot documentation to create one.

Note the snapshot's name, for example, pvc-snap-1.

After creating a snapshot, you can create a PersistentVolumeClaim (PVC) from it to generate the volume restore. Use the following command:

By running this command, you create a new PVC named restore-pvc based on the specified snapshot. The restored volume will have the same data and configuration as the original volume had at the time of the snapshot.
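As a sketch of what such a claim could look like, the snippet below writes a manifest that uses the names from this example (restore-pvc, pvc-snap-1 and mayastor-1-restore). The access mode and the requested size are assumptions; the size should be adjusted to suit the snapshot's source volume.

```shell
# Write a PVC manifest that restores from an existing VolumeSnapshot.
# Assumptions: ReadWriteOnce and the 10Gi request are illustrative values.
cat > restore-pvc.yaml <<'EOF'
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: restore-pvc
spec:
  storageClassName: mayastor-1-restore
  dataSource:
    name: pvc-snap-1
    kind: VolumeSnapshot
    apiGroup: snapshot.storage.k8s.io
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 10Gi
EOF
```

The manifest can then be applied with `kubectl apply -f restore-pvc.yaml`.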

A legacy installation of Mayastor (1.0.x and below) cannot be seamlessly upgraded and needs manual intervention. Follow the steps below if you wish to upgrade from Mayastor 1.0.x to Mayastor 2.1.0 and above. Mayastor uses etcd as a persistent datastore for its configuration. As a first step, take a snapshot of etcd. The detailed steps for taking a snapshot can be found in the etcd documentation.

Compared to Mayastor 1.0, the Mayastor 2.0 feature set introduces breaking changes in some components, which is why the upgrade process from 1.0 to 2.0 is not seamless. These changes are listed below.

ETCD:

Control Plane: The prefixes for control plane have changed from /namespace/$NAMESPACE/control-plane/ to /openebs.io/mayastor/apis/v0/clusters/$KUBE_SYSTEM_UID/namespaces/$NAMESPACE/

Data Plane: The Data Plane nexus information containing a list of healthy children has been moved from

In order to start the upgrade process, the following previously deployed components have to be deleted.

To delete the control-plane components, execute:

Next, delete the associated RBAC operator. To do so, execute:

Once all the above components have been successfully removed, fetch the latest helm chart and save it to a file, for example helm_templates.yaml. To do so, execute:

Next, update the helm_templates.yaml file by adding the following helm label to all the resources that are being created.

Copy the etcd and io-engine spec from the helm_templates.yaml and save it in two different files say, mayastor_2.0_etcd.yaml and mayastor_io_v2.0.yaml. Once done, remove the etcd and io-engine specs from helm_templates.yaml. These components need to be upgraded separately.

Install the new control-plane components using the helm_templates.yaml file.

Verify the status of the pods. Upon successful deployment, all the pods will be in a running state.

Verify the etcd prefix and compat mode.

Verify if the DiskPools are online.

Next, verify the status of the volumes.

After upgrading control-plane components, the data-plane pods have to be upgraded. To do so, deploy the io-engine DaemonSet from Mayastor's new version.

Using the command given below, the data-plane pods (now io-engine pods) will be upgraded to Mayastor v2.0.

Delete the previously deployed data-plane pods (mayastor-xxxxx). The data-plane pods need to be deleted manually because their update strategy is set to OnDelete. Upon successful deletion, the new io-engine pods will be up and running.

NATS has been replaced by gRPC for Mayastor versions 2.0 or later. Hence, the NATS components (StatefulSets and services) have to be removed from the cluster.

After the control-plane and io-engine, etcd has to be upgraded. Before starting the etcd upgrade, add helm labels to the etcd PV and PVCs; this is needed to make them helm compatible. Use the example below to create a labels.yaml file.

Next, deploy the etcd YAML. To deploy, execute:

Now verify the etcd space and compat mode. To do so, execute:

Once all the components have been upgraded, the HA module can now be enabled via the helm upgrade command.

This concludes the legacy upgrade process. Run the commands below to verify the upgrade:

For basic test and evaluation purposes it may not always be practical or possible to allocate physical disk devices on a cluster to Mayastor for use within its pools. As a convenience, Mayastor supports two disk device type emulations for this purpose:

Memory-Backed Disks ("RAM drive")

File-Backed Disks

Memory-backed Disks are the most readily provisioned if node resources permit, since Mayastor will automatically create and configure them as it creates the corresponding pool. However they are the least durable option - since the data is held entirely within memory allocated to a Mayastor pod, should that pod be terminated and rescheduled by Kubernetes, that data will be lost. Therefore it is strongly recommended that this type of disk emulation be used only for short duration, simple testing. It must not be considered for production use.

File-backed disks, as their name suggests, store pool data within a file held on a file system which is accessible to the Mayastor pod hosting that pool. Their durability depends on how they are configured; specifically, on which type of volume mount they are located. If located on a path which uses Kubernetes ephemeral storage (e.g. EmptyDir), they may be no more persistent than a RAM drive would be. However, if placed on their own Persistent Volume (e.g. a Kubernetes Host Path volume) then they may be considered 'stable'. They are slightly less convenient to use than memory-backed disks, in that the backing files must be created by the user as a separate step preceding pool creation. However, file-backed disks can be significantly larger than RAM disks as they consume considerably less memory resource within the hosting Mayastor pod.

Creating a memory-backed disk emulation entails using the "malloc" uri scheme within the Mayastor pool resource definition.

The example shown defines a pool named "mempool-1". The Mayastor pod hosted on "worker-node-1" automatically creates a 64MiB emulated disk for it to use, with the device identifier "malloc0" - provided that at least 64MiB of 2MiB-sized Huge Pages are available to that pod after the Mayastor container's own requirements have been satisfied.

The pool definition accepts URIs matching the malloc:/// schema within its disks field for the purposes of provisioning memory-based disks. The general format is:

malloc:///malloc<DeviceId>?<parameters>

Where <DeviceId> is an integer value which uniquely identifies the device on that node, and where the parameter collection <parameters> may include the following:

Note: Memory-based disk devices are not over-provisioned, and the memory for them is allocated from the 2MiB-sized Huge Page resources available to the Mayastor pod. That is to say, creating a 64MiB device requires that at least 33 (32+1) 2MiB-sized pages are free for that Mayastor container instance to use. Satisfying the memory requirements of this disk type may require additional configuration on the worker node and changes to the resource request and limit spec of the Mayastor daemonset, in order to ensure that sufficient resource is available.
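The page arithmetic in the note can be sketched as a small helper. This assumes that the single extra page seen in the 64MiB example (32+1) applies to other device sizes as well, which this guide does not state explicitly; `malloc_hugepages_needed` is a hypothetical name.

```shell
# Estimate how many 2MiB huge pages a malloc device of a given size needs.
# Assumption: one extra page of overhead, generalized from the
# 64MiB -> 33 pages example in the note above.
malloc_hugepages_needed() {
  size_mib=$1
  page_mib=2
  # Round up to whole pages, then add the single extra page.
  echo $(( (size_mib + page_mib - 1) / page_mib + 1 ))
}

malloc_hugepages_needed 64   # prints 33
```

On a Linux worker node, the result can be compared with the HugePages_Free value reported in /proc/meminfo, bearing in mind that the Mayastor container's own requirements must be met first.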

Mayastor can use file-based disk emulation in place of physical pool disk devices, by employing the aio:/// URI schema within the pool's declaration in order to identify the location of the file resource.

The examples shown create a pool using a file named "disk1.img", located in the /var/tmp directory of the Mayastor container's file system, as its member disk device. For this operation to succeed, the file must already exist at the specified path (which should be the full path to the file), and this path must be accessible to the Mayastor pod instance running on the corresponding node.

The aio:/// schema requires no other parameters but optionally, "blk_size" may be specified. Block size accepts a value of either 512 or 4096, corresponding to the emulation of either a 512-byte or 4kB sector size device. If this parameter is omitted the device defaults to using a 512-byte sector size.
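The rules above can be sketched as a helper that builds the disk URI and rejects unsupported values. It assumes that blk_size is appended as a query string, in the same way that parameters are passed to the malloc:/// schema; `aio_disk_uri` is a hypothetical name.

```shell
# Build an aio:/// disk URI, optionally with a blk_size parameter.
# Assumption: the parameter is appended as a query string, as with malloc:///.
aio_disk_uri() {
  path=$1
  blk_size=${2:-}
  case $path in
    /*) ;;  # full (absolute) path, as required
    *) echo "path must be absolute: $path" >&2; return 1 ;;
  esac
  case $blk_size in
    "")       echo "aio://$path" ;;
    512|4096) echo "aio://$path?blk_size=$blk_size" ;;
    *) echo "blk_size must be 512 or 4096" >&2; return 1 ;;
  esac
}

aio_disk_uri /var/tmp/disk1.img 4096   # prints aio:///var/tmp/disk1.img?blk_size=4096
```

Omitting the second argument yields a URI without parameters, in which case the device uses the default 512-byte sector size.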

File-based disk devices are not over-provisioned; to create a 10GiB pool disk device requires that a 10GiB-sized backing file exist on a file system on an accessible path.

The preferred method of creating a backing file is to use the Linux truncate command. The following example demonstrates the creation of a 1GiB-sized file named disk1.img within the directory /tmp.
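A minimal sketch of that step, assuming the GNU coreutils versions of truncate and stat as found on most Linux distributions:

```shell
# Create a sparse 1GiB backing file named disk1.img in /tmp.
truncate -s 1G /tmp/disk1.img

# Confirm the apparent size in bytes (1GiB = 1073741824).
stat -c %s /tmp/disk1.img
```

The file is sparse, so it does not immediately consume 1GiB of disk space. The path used here must match the path given in the pool's aio:/// URI as seen from within the Mayastor container.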

The Mayastor pool metrics exporter runs as a sidecar container within every io-engine pod and exposes pool usage metrics in Prometheus format. These metrics are exposed on port 9502 using an HTTP endpoint /metrics and are refreshed every five minutes.
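To inspect the endpoint by hand, the Prometheus text output can be reduced to its sample lines with a small filter, sketched below. `metric_samples` is a hypothetical helper, and how you reach port 9502 (pod IP, port-forward, and so on) depends on your cluster.

```shell
# Keep only sample lines from Prometheus text-format output.
# Lines starting with '#' are HELP/TYPE metadata or comments.
metric_samples() {
  grep -v '^#' | grep -v '^[[:space:]]*$'
}
```

For example, `curl -s http://<io_engine_pod_ip>:9502/metrics | metric_samples` prints only the metric samples, where `<io_engine_pod_ip>` is the address of an io-engine pod.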

When is activated, the stats exporter operates within the obs-callhome-stats container, located in the callhome pod. The statistics are made accessible through an HTTP endpoint at port 9090, specifically using the /stats route.

To install, add the Prometheus-stack helm chart and update the repo.